You may already know that I am a huge advocate of the Dell R710 when it comes to building lab and test environments, or even small office solutions on a small budget. My reasoning is that you just cannot beat the number of cores and the amount of RAM that an R710 can hold for the money. For about $700 (figuring second-hand prices) you can build yourself an R710 with 144GB of RAM and 24 vCPU along with an additional NIC. These specifications are a fantastic value if you are into testing virtualization and don’t want to tear down one environment to put up another.

However, the one caveat with the R710 is that if you populate all 18 DIMM slots, the memory speed will clock down from 1,066 MHz or 1,333 MHz to 800 MHz. It is also a waste, then, to pay extra for 1,066 MHz or 1,333 MHz memory if it’s going to downclock. I’ve seen complaints about this on various forums, and though I have had many clients/environments running this configuration without issue, I wanted to find out what the actual, measured difference in performance is. I’ve been meaning to move my second ESXi host into my lab, so prior to doing that I experimented by running Geekbench 3 with 18 DIMMs and then with 12 DIMMs. I have 8GB DIMMs in my servers, so the question is whether the speed increase of running only 96GB of RAM is worth giving up the capacity of 144GB.
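To make the two configurations concrete, here is a minimal sketch of the trade-off. It assumes the DIMMs are spread evenly over 2 sockets with 3 memory channels each (6 channels total), and the speed table contains only the two results observed in this article; it is not a general rule for every DIMM type or rank count.

```python
# Assumption: 2 sockets x 3 memory channels, 8GB DIMMs spread evenly.
SOCKETS = 2
CHANNELS_PER_SOCKET = 3
DIMM_SIZE_GB = 8

# DIMMs per channel -> memory speed (MHz) as observed in this article only.
OBSERVED_SPEED_MHZ = {2: 1333, 3: 800}


def describe_population(dimm_count):
    """Return capacity and observed speed for an evenly populated host."""
    channels = SOCKETS * CHANNELS_PER_SOCKET
    if dimm_count % channels != 0:
        raise ValueError("this sketch assumes DIMMs are spread evenly across channels")
    dimms_per_channel = dimm_count // channels
    return {
        "dimms": dimm_count,
        "dimms_per_channel": dimms_per_channel,
        "capacity_gb": dimm_count * DIMM_SIZE_GB,
        # None means the article has no measurement for that population.
        "speed_mhz": OBSERVED_SPEED_MHZ.get(dimms_per_channel),
    }


for count in (12, 18):
    print(describe_population(count))
```

Dropping from 18 to 12 DIMMs gives up 48GB of capacity in exchange for the faster memory clock.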

Because I am using VMware ESXi 6.0, I wanted to run the benchmark within a VM. I was going to install Windows 2012 R2 on the server directly and test with Geekbench 3 on a physical deployment, but I thought that it may not reflect what most people would be doing with these servers today. Personally, I think that if the speed increase was notable on a physical install of 2012 R2 then it should be reflected in a virtual install as well, but I wanted to keep things in order with what I actually planned on doing. The R710 I did this testing on was only running the single Windows 2012 R2 VM fully updated – vCenter was installed on another host and no other VMs were running on the host at the time. The full specs of the host are as follows:

- Dell PowerEdge R710

- Dual Intel Xeon X5670 2.93 GHz 6-Core/12-Thread CPUs

- 12 or 18 × 8GB Hynix PC3-10600R DDR3 1333 MHz ECC Memory

- Intel Pro/1000 VT Quad Port NIC

- Dell PERC 6i

- 8 × 146GB 15K SAS Drives in RAID 50

The Method

I purchased Geekbench 3 for this test so that I could run the 64-bit mode. The testing methodology was pretty straightforward: I started with 144GB of DIMMs installed (running at 800 MHz), ran the test on VMs of various CPU/RAM specs, and then repeated the same tests after removing 1 DIMM from each channel so that the RAM would operate at 1,333 MHz. After each benchmark completed I shut down the VM, resized the CPU/RAM, and booted it back up to run the test again. The VM sizing was mirrored between the 96GB-populated and 144GB-populated scenarios:

- 4 vCPU and 16GB of RAM

- 8 vCPU and 32GB of RAM

- 16 vCPU and 64GB of RAM

- 24 vCPU and 64GB of RAM

- 24 vCPU and 128GB of RAM (only performed on the 144GB-populated instance to avoid swapping)
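The test matrix above can be written out as a short sketch. The names here are illustrative, and the "VM RAM must fit in host RAM" check is a simplification of the swapping concern, not how ESXi actually accounts for memory.

```python
from itertools import product

# Host memory populations from the article (label -> installed GB).
HOST_CONFIGS = {"12 DIMMs @ 1,333 MHz": 96, "18 DIMMs @ 800 MHz": 144}

# VM sizes from the list above as (vCPU, RAM in GB).
VM_SIZES = [(4, 16), (8, 32), (16, 64), (24, 64), (24, 128)]


def build_test_matrix():
    """Pair every host population with every VM size that fits in its RAM."""
    runs = []
    for (label, host_gb), (vcpu, vm_gb) in product(HOST_CONFIGS.items(), VM_SIZES):
        if vm_gb > host_gb:
            continue  # skip runs that would force the host to swap
        runs.append((label, vcpu, vm_gb))
    return runs


for label, vcpu, vm_gb in build_test_matrix():
    print(f"{label}: {vcpu} vCPU / {vm_gb}GB")
```

This yields nine runs: all five VM sizes on the 144GB host and the first four on the 96GB host.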

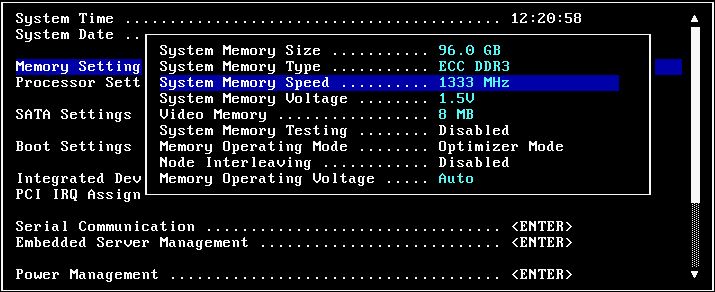

Here you can see that the RAM does in fact report at 1,333 MHz when I have only 96GB worth of DIMMs populated:

My Predictions

I know that RAM operating at 800 MHz is going to put up lower numbers than RAM operating at 1,333 MHz. However, I am not sure how much that will impact actual “system” performance. I had talked to a colleague about this test and one method he came up with was to create a RAM disk and see the difference in throughput which I thought would be a good idea. After looking at how Geekbench 3 does memory testing I deemed this unnecessary because it does basically that – it has some known amount of data that it moves into and out of RAM and measures the throughput. My overall prediction was that while the throughput into and out of RAM may be 66% quicker (by just comparing memory frequency) I suspected that it would make a difference of only around 10 – 20% regarding overall CPU figures (which is actually more important to me given my typical VM workload type). I came up with this number because of how many environments I have worked on that have memory scenarios that match this test and have never been able to quantify the difference. It’s not a very scientific guess, but I figure if it were any more than 10 – 20% of difference then I would probably be able to measure that in one client as compared to another who have the different memory configurations. The size of the memory associated with the VM should not matter as I highly doubt Geekbench 3 is trying to fill all available memory, but I did the tests anyway increasing the RAM allocation each time.
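The 66% figure comes purely from the ratio of the two memory speeds. As a rough sketch, the theoretical peak bandwidth can be estimated by treating the quoted "MHz" values as transfer rates and assuming 64-bit (8-byte) DDR3 channels with 6 populated channels in both configurations. These are paper numbers under those assumptions, not what Geekbench 3 measures.

```python
BYTES_PER_TRANSFER = 8  # a DDR3 channel is 64 bits wide
CHANNELS = 6            # assumption: 2 sockets x 3 channels, all populated


def peak_bandwidth_gb_s(rate_mt_s, channels=CHANNELS):
    """Theoretical peak bandwidth in GB/s for a given transfer rate (MT/s)."""
    return rate_mt_s * 1_000_000 * BYTES_PER_TRANSFER * channels / 1e9


slow = peak_bandwidth_gb_s(800)
fast = peak_bandwidth_gb_s(1333)
gain_percent = (fast / slow - 1) * 100

print(f"800 MHz:   {slow:.1f} GB/s theoretical peak")
print(f"1,333 MHz: {fast:.1f} GB/s theoretical peak")
print(f"Difference: {gain_percent:.1f}%")
```

Because the channel count is the same in both cases, the percentage difference depends only on the two transfer rates.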

Results!

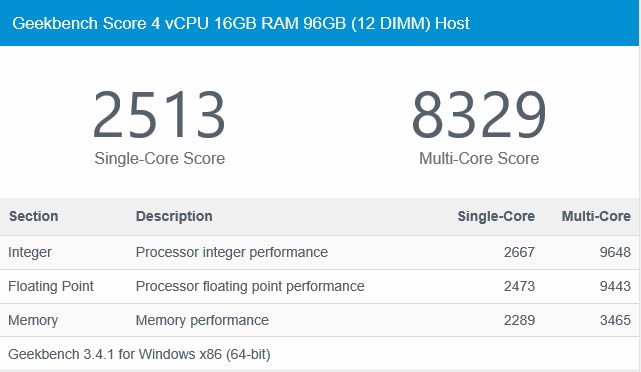

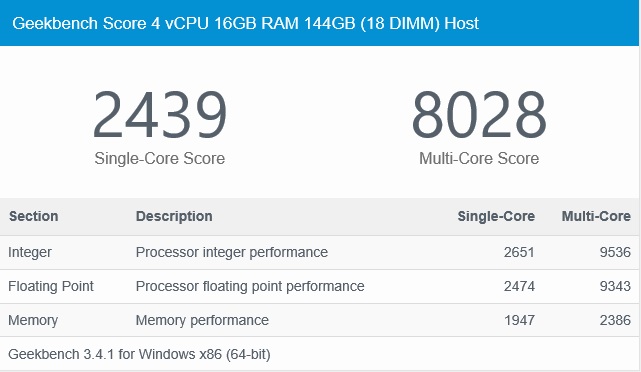

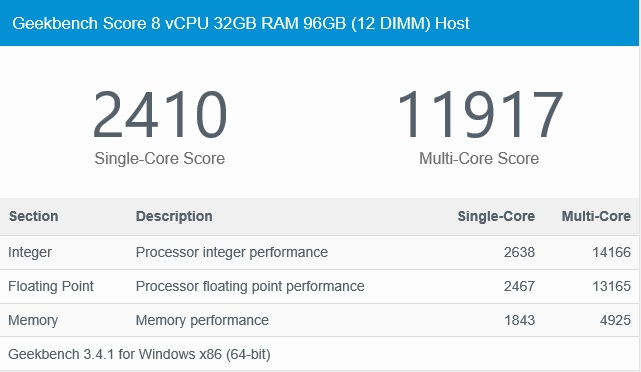

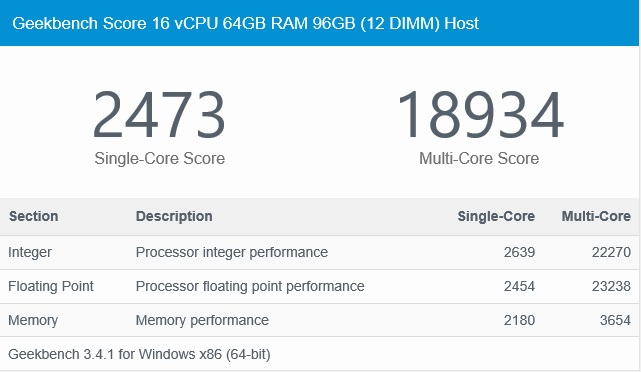

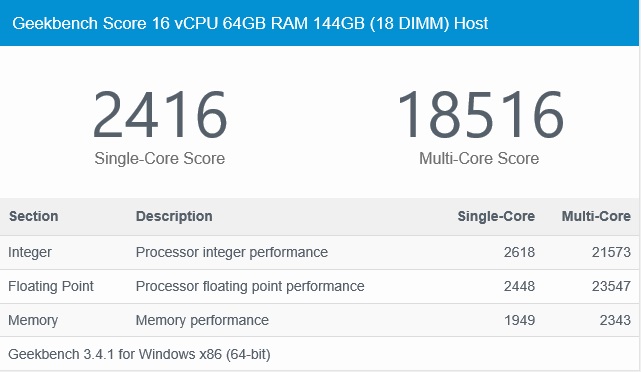

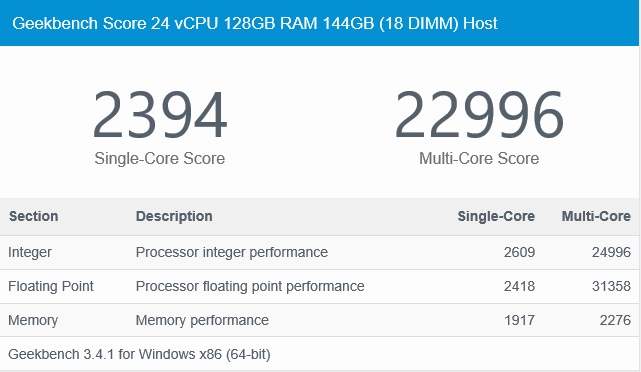

I am going to provide a summary as well as the actual HTML result from Geekbench 3 so that you can compare minute details for yourself. Below are the overall scores that will alternate between the 96GB/144GB-equipped host. I’ve edited the HTML file by changing the chart header name to show the specific test scenario that was run. Clicking on each chart will open the full Geekbench 3 HTML export result:

What does it all mean?

Analyzing the results isn’t too hard – it’s clear to see that having the RAM function at 1,333 MHz does make a difference. However, how you interpret that result is going to be subjective. Because my lab/clients/etc. are full of mostly “general purpose” VMs it is obvious as to why it hasn’t really mattered whether the RAM is running at 800 MHz or 1,333 MHz. However, if you have a very high I/O application that leans heavily on RAM, you could notice a difference. An example of such workload might include a very (very) busy database server.

Looking at the first two charts, which compare the 4 vCPU, 16GB RAM VM in the 12 DIMM and 18 DIMM scenarios, we can see that the difference in RAM performance is large (these scores are a point system and not any sort of raw number; for raw figures click the images and scroll to the bottom of the output). For instance, a 4 vCPU 16GB RAM VM backed by 800 MHz RAM only scores 2,386 multi-core memory points while the 1,333 MHz twin scores 3,465! That’s a 45% increase with the faster memory configuration, which is not far off the 66% difference in operating frequency. Makes sense.

However, notice the single-core memory scores: the 4 vCPU 16GB VM with 800 MHz RAM scores 1,947 memory points while the faster 1,333 MHz version scores 2,289 points. That’s only about an 18% increase in speed despite the 66% faster memory clock.
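For anyone who wants to re-check the arithmetic, here is a small sketch that recomputes the percentage differences from the scores quoted above for the 4 vCPU, 16GB VM. The helper name is just for illustration.

```python
def percent_increase(slower, faster):
    """Percentage by which `faster` exceeds `slower`."""
    return (faster - slower) / slower * 100


# Geekbench 3 memory scores quoted above (4 vCPU / 16GB VM).
multi_core = percent_increase(2386, 3465)
single_core = percent_increase(1947, 2289)
# Memory clock difference for reference.
clock = percent_increase(800, 1333)

print(f"Multi-core memory score:  +{multi_core:.1f}%")
print(f"Single-core memory score: +{single_core:.1f}%")
print(f"Memory clock:             +{clock:.1f}%")
```

The multi-core score captures a good share of the clock difference, while the single-core score captures much less of it.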

Another thing that’s interesting to note is that the memory points top out at 4,925 with the 8 vCPU 32GB RAM VM, yet the 16 vCPU variations fall off in regard to memory performance. This is probably due to a combination of how Geekbench 3 scales across more cores and RAM and how ESXi 6.0 handles NUMA on a dual-socket host. I tell my clients all the time that more cores and more RAM do not make a better performing VM by default.

My Conclusions

If you look at each and every single-core memory test, the points differ by roughly 10 – 18%. Remember that Geekbench 3 and other benchmarks like it are considered “synthetic” benchmarks that set up absolute cases of “here, try this specific task!” Meaning, it’s not likely that your workload will exactly match either the single-core or multi-core tests at all. So, sure, multi-core RAM throughput can benefit from the faster memory as shown by the test, but are all of your VMs running multi-thread-optimized workloads that utilize the RAM exclusively? Probably not. Instead, in the case of an ESXi host where you’re trying to virtualize infrastructure as a whole, you need to make a decision: do I want to stack this particular host (or one like it) with tons of memory and have fewer hosts in the cluster overall, or do I want absolute max performance at the cost of capacity-per-host, which may require more licensing and hardware to maintain? This is literally the debate every infrastructure engineer has. I’ve written a blog post previously that discusses sizing ESXi hosts and this is one of the topics I focused on.
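The capacity side of that decision is simple arithmetic. The sketch below uses a hypothetical cluster that needs 400GB of VM memory in total; that figure and the helper name are made up for illustration, and the calculation ignores hypervisor overhead, failover headroom, and CPU limits.

```python
import math


def hosts_needed(total_vm_ram_gb, ram_per_host_gb, spare_hosts=0):
    """Minimum hosts required to hold the VM memory, plus optional spares."""
    return math.ceil(total_vm_ram_gb / ram_per_host_gb) + spare_hosts


DEMAND_GB = 400  # hypothetical total VM memory, not a figure from this article

for label, per_host_gb in (("96GB @ 1,333 MHz", 96), ("144GB @ 800 MHz", 144)):
    count = hosts_needed(DEMAND_GB, per_host_gb)
    print(f"{label}: {count} hosts")
```

In this made-up case the faster configuration needs five hosts where the fully populated one needs three, and every extra host brings its own licensing, power, and maintenance.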

For me, because I know my workloads vary greatly and almost none of them have known, exclusively multi-core, RAM-heavy I/O patterns, I personally think that refusing to let the memory drop to 800 MHz may be a costly mistake if you’re trying to build a lab or small office host on a budget while holding as much RAM as possible. The only thing I’d caution is that these results show a single VM hitting its allocated memory and CPUs by itself; they don’t test many VMs trying to do the same. The test, if performed against many VMs, would likely yield lower numbers in each instance, but the margins between the slower and faster memory speeds should be the same.

Some Comparisons

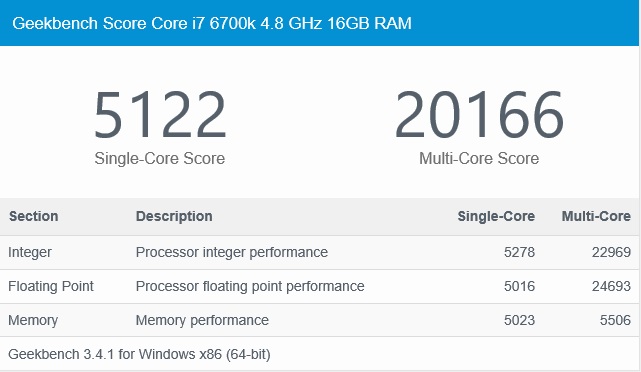

Just to be clear, I am not suggesting that Dell R710s be placed in production today as “new”; I am merely suggesting that they can still go a long way as virtualization hosts. I recently built a brand new Intel Skylake desktop around an Intel Core i7 6700K CPU with 16GB of DDR4 2,666 MHz RAM. I have the 6700K overclocked to 4.8 GHz full-time and ran Geekbench 3 on it as a sort of comparison:

Without a doubt the 6700K at 4.8 GHz performs very well, but considering there’s really no way of getting 4.8 GHz in any available server, and also considering the cost of this desktop and its water-cooling solution (along with the low core count by ESXi host standards), the R710s really put up some good numbers for what they are. Don’t forget that the R710 has dual CPUs while my 6700K desktop has a single socket, but still, box-for-box, the multi-core integer and floating point scores of the R710 are pretty good! The memory throughput of the 6700K machine is obviously great, but it’s running much faster DDR4 memory behind a much faster CPU.

Just to go one step further, I also ran Geekbench 3 on my Lenovo TS140 which is equipped with a single Intel Xeon E3-1246v3 CPU which runs at 3.5 GHz and has 32GB of DDR3 ECC memory at 1,600 MHz. This final test isn’t a great one because my TS140 is also an ESXi 6.0 host and has about 10 – 12 VMs running. I gave a Windows 2012 R2 VM 8 vCPU and 8GB of RAM and ran the test:

The numbers for the Lenovo TS140 above are actually pretty comparable to the 8 vCPU VM running on the R710 earlier in this article. However, as I said, this host actually has a number of VMs running on it that may make a decent difference in overall performance in the VM actually running the benchmark.

I hope you all find this article useful – it was interesting running the many tests and seeing the output. This may convince or sway you one way or another – if you find that this information helps you make a decision on what to build/buy please let me know! I am also going to try and find examples of other machines that may display slower RAM speed as the DIMMs become fully populated and if I can get my hands on one I’ll perform similar tests. Thanks for reading!

I am a Sr. Systems Engineer by profession and am interested in all aspects of technology. I am most interested in virtualization, storage, and enterprise hardware. I am also interested in leveraging public and private cloud technologies such as Amazon AWS, Microsoft Azure, and vRealize Automation/vCloud Director. When not working with technology I enjoy building high performance cars and dabbling with photography. Thanks for checking out my blog!

February 21, 2016

I’d be curious what the performance difference would be on a similarly equipped DDR2 host. Also the power consumption difference…

February 21, 2016

Thanks Chris – I’ve got ten 1950/2950s with dual E5450s and 32GB of RAM. Unfortunately it’s hard to get the DDR2 generation servers to hold this much memory. You’d probably be looking at something like a Dell R900 (4 socket with RAM risers) or HP DL580 G5. Both are extremely power hungry and loud and still don’t support as much memory. I think the R900 and DL580 G5 support 256GB of RAM while the R710 supports 288GB.

February 21, 2016

Hi Jon,

Thank you for your research. I always enjoy reading your blog, good job so far!

If you are looking for blog topics, I think I’ve got one for you 🙂

I’d be interested to know the ‘real’ difference between having single-channel vs multi-channel (dual, tri, quad) memory.

February 21, 2016

Hi Jan – thanks for following! I’d be interested in doing some experiments – do you have any specifics in mind?