It’s been a bit since my last update around here but I’ve been busy both at work and home. Meanwhile, the amount of data I am storing on my home network is growing and growing with the potential to grow to as much as 25TB. However, I am realizing I don’t really have a place to back it up to and as we all know, RAID is not backup. I could purchase Crashplan or Carbonite and pay some money to put my personal data up on shared storage which is usually a pretty safe bet. However, that wouldn’t remedy the fact that I have somehow acquired 21 1TB enterprise hard drives and an abundance of Dell 1950/2950 servers… so, the goal here will be to create something to back data up to on-site with redundancy but not too much expense.

It’s not often you have so many hard drives of decent size lying around, but having taken in all those Dell servers (which I use primarily for VMware testing), each one coming with a bunch of disks, it really added up quickly. I was going to sell them off for a few bucks, but since they’re enterprise units (and thus have a bit-error-rate of 10^-15) I started thinking that it may be worth it to put together some ultra cheap storage to do disk-to-disk replication. Right now, I am using a DS1513+ off-site to replicate my data to, so there are times where I am doing VM level backups over a VPN. It works fine, but I am being really sparse in what I am pushing off-site as it takes a while. My idea is to back up to some sort of large storage device on-site and then replicate from that unit over the VPN if I need to. So, what do you put 15 – 20 disks in that doesn’t cost much, is reliable, and is decently fast?

I started looking at older Dell EqualLogic devices – mainly the PS6000 or PS4000, but they were all going for $700 – $1200. Not only that, but they’re true SANs, so they’d have a decent amount of power-draw as they have to do a bunch of processing for iSCSI and all. Going even older, like an EqualLogic PS5000, would cost less but likely consume more power. Since I am only booting the device when I want to replicate, the EqualLogics are probably not an ideal choice anyway, as they’re really meant to just remain on. I was thinking more along the lines of something direct-attached. I checked out Dell MD1200’s – too expensive. Saw some SuperMicro chassis which are decent but still $400 – $600. Then it dawned on me – the Dell MD1000 is old enough that places are dumping them cheap but new enough to support drives large enough to be worthwhile, and since they’re DAS they shouldn’t draw an insane amount of power. We usually make fun of these at work because they’re lost in a sea of PS6100’s and Compellent systems, but it’s perfect for my “home use”.

I’ve used PowerVault before – MD1000, MD1200, MD1220, and MD3200i – they’re all pretty decent at what they do… so, why not? Remember, this is secondary replicated data on the cheap – the hard disks are “free” and I have plenty of servers to hook them up to. I just needed a PERC 6/E and the MD1000. 20 minutes later on eBay and I had a PERC 6/E with 512MB of cache for $40 shipped and a Dell MD1000 for $145 shipped coming my way. The most important part of buying a chassis for storage is that it comes with the trays – the unit I ordered came with 15 trays, which was perfect. There were some MD1000’s going for $100 but they had no trays, which are about $10 – $12 each. So for $185 I was able to use 15 of my 21 1TB disks to create an array and have plenty of disks as cold spares.

My unit has dual EMM controllers and power supplies as well, which will let me cable the two PERC 6/E ports to each controller port if I wanted and cable up each power supply for redundancy as well. This isn’t very important to me since I am going to only have this unit on for scheduled replication, but it’s nice to have.

In the photo above you’ll see that there’s an “in” and “out” on each EMM module – they use SFF-8470 SAS ports which is what is also on the PERC 6/E so that’s easy. I have a 2m SFF-8470 cable from former projects so I am good to go there. Below the two EMMs are the dual power supplies. They take typical ATX power cables. No big deal there.

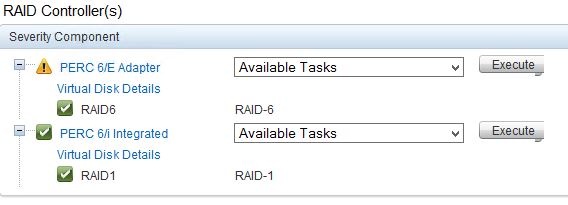

Installing the PERC 6/E is simple as it’s a standard PCIe card. I plopped it into a spare Dell 2950. The specs of that Dell are a little overkill for a backup server, but it’s one of the servers that I wasn’t using. It has dual quad-core Xeon E5420’s and 32GB of RAM. I also have two 300GB SAS drives in RAID1 on the PERC 6/i. I installed Windows Server 2012 R2 Standard and patched it up. I also flashed the Dell 2950 BIOS, iDRAC, PERC 6/i, and PERC 6/E firmware to the latest versions from within Windows real quick. I use Dell OpenManage Server Administrator (OMSA) on physical servers to observe and configure various aspects of the machine from within Windows rather than booting into the BIOS or RAID setup.

You’ll notice above that the Dell 2950 has a yellow alarm on – I only have one PSU cabled on the server since it’s not relied on for any HA. You’ll also notice that all of the drive lights are lit on the MD1000! This was a relief because after I purchased the MD1000 and PERC 6/E I found some people saying that they couldn’t use SATA drives in the unit without SATA interposers. Interposers are little circuit boards that go in the back of the disk tray and allow some SAS/SCSI commands and features to work for SATA disks. I only have 6 of these interposers but have 15 drives – I priced interposers out on eBay and they’re $10 – $18 each – yuck! I either got lucky or Dell changed support for SATA. Boy was I relieved to see in OMSA that all the disks were recognized.

The next step was simply creating the RAID configuration in OMSA. Because I want reliability over total size, since this is technically the “backup” location, I chose to use RAID6. If you’re unfamiliar with RAID6, it’s really similar to RAID5 but with dual parity, so the array can lose two disks without losing data. With 1TB disks a RAID5 should be able to rebuild after a single failure, but again, I am going to rely on my data being here if it gets lost on the RAID50 pool in my ESXi server. RAID6 has a higher write penalty than RAID5, but that’s the price I’ll pay for not having to monitor this array as closely.

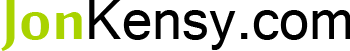

Above you can see that the enclosure shows up properly in OMSA and has all green lights on the EMMs, Fans, Disks, and PSUs. Great!

All of the disks show up too – above you’ll see that they’re all SATA. They’re varying types of Dell, WD, and Hitachi drives. They’re all 7,200 RPM and 1TB so they work well together.

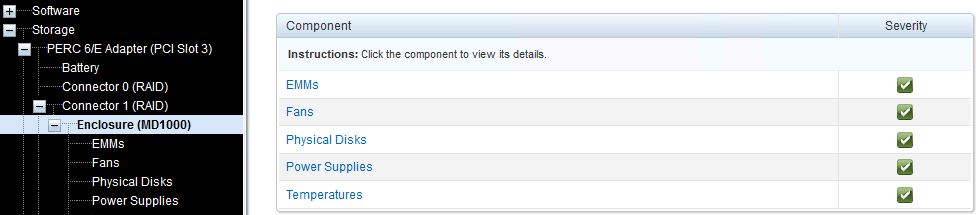

Above you see that I configured the Virtual Disk for RAID6 (with background initialization still running). There are no hot spares assigned even though I have 6 spare 1TB disks left over. I didn’t want to sacrifice total array size by losing 2 disks’ worth to parity and then another slot to a hot spare. Remember, I am going from ~25TB of space in a RAID50 to this RAID6 array, so I am already going to have to leave about 50% of my data out of the backup. Background initialization of a RAID6 takes quite some time, especially with 15 1TB disks, so patience is required.

You can see that there’s an alarm on the PERC 6/E – the only drag with the $40 PERC card I bought is that the battery is done. No big deal as I can get one for cheap later. I forced write-back cache mode because this is secondary storage. I am going to allow writing to the write-back cache even if the PERC battery is dead because I want the speed. The risk is that if the unit loses power while there is still data in the 512MB cache, that cached data will be lost before it hits the disks. I am OK with that in this case. If you are going to use this as storage for VMs or as a primary storage device, then you’d want to replace the battery first and leave write-back unforced, so that writes only go to the cache when the battery is present and healthy.

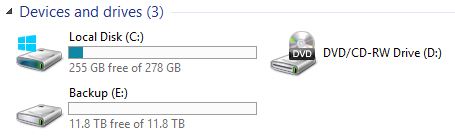

Once initialized and brought online, the 15-disk RAID6 array formats to 11.8TB. Not bad!
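If you are wondering where 11.8TB comes from, here is a quick sketch of the math. It assumes the drives are sold in decimal terabytes (10^12 bytes) while Windows reports size in binary units, and that RAID6 gives up two disks' worth of space to parity. The function name is just something I made up for the example.

```python
def raid6_usable_tib(disk_count: int, disk_size_tb: float) -> float:
    """Usable RAID6 capacity in binary terabytes (TiB).

    Assumes equal-sized disks sold in decimal TB (10**12 bytes) and
    two disks' worth of capacity consumed by dual parity.
    """
    if disk_count < 4:
        raise ValueError("RAID6 needs at least 4 disks")
    usable_bytes = (disk_count - 2) * disk_size_tb * 10**12
    return usable_bytes / 2**40


# 15 x 1TB disks, as in the MD1000 above
print(f"{raid6_usable_tib(15, 1.0):.1f}")  # prints 11.8
```

So 13TB of raw data capacity shows up as roughly 11.8 once the OS counts in powers of two.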

Finally, this whole setup is only affordable if it doesn’t use a whole lot of power. When the chassis fires up the fans peak pretty high leaving you with a sinking feeling that this thing is going to suck down serious power – I was fearing 400 – 600W based on the rating I saw on the power supplies. I am planning on only running this MD1000 and 2950 while doing monthly or so backups. If I did end up backing up the full 11.8TB it would take me 26 hours to transfer at 125 MB/s (~1 Gbps without any LACP). I may create a team and see if I can increase that throughput but most of what I am pulling from has only single 1 Gbps NICs.
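The 26 hour figure is easy to check. This little sketch assumes the full 11.8TB is counted in decimal units (1TB = 1,000,000 MB) and that the link sustains 125 MB/s the whole time, which is the theoretical ceiling of 1 Gbps rather than something you will see in practice.

```python
def transfer_hours(size_tb: float, rate_mb_per_s: float) -> float:
    """Hours needed to move size_tb (decimal TB) at a steady rate in MB/s."""
    size_mb = size_tb * 1_000_000
    return size_mb / rate_mb_per_s / 3600


# Full array over a single 1 Gbps link at its theoretical maximum
print(f"{transfer_hours(11.8, 125):.1f} hours")  # prints 26.2 hours
```

Any protocol overhead or slower source disks will push that number up, so treat it as a best case.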

“Ok but how much power does it use?” I can hear you guys asking, so I decided to test. The rate I set in the Belkin device, $0.16/kWh, is slightly higher than what I actually pay. To get a reading for the whole chassis with its dual power supplies, I have a power strip plugged into my Belkin wattage device. Let’s find out what this thing uses!

Above you’ll see that the chassis is pulling about 8 watts or $11.90/year with just the one PSU plugged in – that’s not even turned on!

Plug in both PSUs and you see about 2x the cost for a year, or about 16 watts and $20.57. Again, not actually turned on yet.

When I power the MD1000 up via the power switches on the back, it spikes to around 250 watts and $358/year. You’ll notice, though, that the disk lights are not up yet.

Finally, with the disks up and the OS booted you can see it pulls 223 watts or $312/year. Not great, but not entirely horrible either. Having one or two PSUs switched on does not change this value. Also, the disks were doing background initialization, so they were busy, as indicated by the activity lights. It’s possible the power consumption will fall after the initialization and only rise during actual disk activity.
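For anyone without a wattage meter, the yearly cost can be approximated from a steady wattage and a kWh rate. This is only a sketch: it assumes a constant draw for all 8,760 hours of a non-leap year and uses the $0.16/kWh rate from above. The function name is made up for the example.

```python
HOURS_PER_YEAR = 24 * 365  # non-leap year


def annual_cost(watts: float, rate_per_kwh: float = 0.16) -> float:
    """Yearly electricity cost in dollars for a constant draw in watts."""
    kwh_per_year = watts / 1000 * HOURS_PER_YEAR
    return kwh_per_year * rate_per_kwh


# Steady-state reading with disks up and the OS booted
print(f"${annual_cost(223):.2f}/year")  # prints $312.56/year
```

That lines up with the $312/year reading. The meter's numbers for the smaller standby loads don't match this formula exactly, presumably because the displayed wattage is rounded or fluctuating.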

Justification?

In summary, this isn’t a bad way to go. I figure it’ll take me 30 hours to back up a full 11.8TB. You can calculate that it’ll cost me about $1.07 in electricity for the chassis for each full 11.8TB backup. I am using a physical Dell 2950 for the PERC 6/E interface, but if I kicked this chassis into a VM I could eliminate the electricity overhead of the 2950 also. So, considering the 2950 also uses about 250 watts, a 30 hour backup should cost me about $2.30 once a month at $0.16/kWh for the 2950+MD1000. This doesn’t include the $185 chassis/PERC cost, or the disks that I had on hand, but it’s a fair monthly “usage” fee on this – keeping in mind that I only boot it up when needed for the backup. 15 new 1TB disks should cost you about $975, so you can spread that expense out however you want. And, obviously, you can buy fewer, larger disks for a better value I am sure, but I already had the 1TB units. Figure $185 over a year is $15/month. So $17.30 a month for a full 11.8TB backup via local replication at 1 Gbps, with control over how you get and move the data, is not bad. $17.30 a month is with the hardware baked in, too. Compared to the Carbonite unlimited storage “Personal Edition” entry price of $60/year, which only offers file sync, I don’t think it’s a bad deal at all. Confused?

How do we justify a $17.30 a month cost (most of which is the hardware being dissolved into the first year of monthly cost) for replication when there are so many cloud-based “unlimited storage” solutions available? It seems difficult at first but when you actually sit down and figure out what you get it’s not a hard argument to win in your favor. Consider the table I’ve put together below:

| Service | Number of Computers | Amount of Storage | Replication Speed | Cost per Month | Notes |
| --- | --- | --- | --- | --- | --- |
| Carbonite Personal | 1 | “Unlimited” | No Data | $5 | No Server OS |
| Carbonite Server | Unlimited | 500GB | ~60 Mbps (54.6GB in 1 hour 53 min) | $75 | Server OS, Databases |
| Carbonite Server | Unlimited | 11.8TB | ~60 Mbps (54.6GB in 1 hour 53 min) | $3,027 | Server OS, Databases |
| Self Rolled | Unlimited | 11.8TB | 115-122 MB/s (54.6GB in 7.2 minutes) | $17.30 | Server OS, Databases, VM |
| Self Rolled with Disk Purchase (first year only) | Unlimited | 11.8TB | 115-122 MB/s (54.6GB in 7.2 minutes) | $98.55 | Server OS, Databases, VM |
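The two “Self Rolled” monthly costs can be roughly reproduced with the sketch below. It assumes one 30 hour backup per month, the wattages and prices quoted earlier in the post, and hardware spread evenly over 12 months. The variable and function names are just for illustration.

```python
RATE_PER_KWH = 0.16      # rate set in the wattage meter
BACKUP_HOURS = 30        # one full backup per month
MD1000_WATTS = 223       # measured with disks up
SERVER_WATTS = 250       # rough figure for the Dell 2950
HARDWARE_COST = 185.0    # MD1000 + PERC 6/E
DISK_COST = 975.0        # 15 new 1TB disks, if you had to buy them


def energy_cost(watts: float, hours: float, rate: float = RATE_PER_KWH) -> float:
    """Electricity cost in dollars for running at `watts` for `hours`."""
    return watts / 1000 * hours * rate


md1000_per_backup = energy_cost(MD1000_WATTS, BACKUP_HOURS)
both_per_backup = md1000_per_backup + energy_cost(SERVER_WATTS, BACKUP_HOURS)
total_monthly = both_per_backup + HARDWARE_COST / 12
total_monthly_with_disks = total_monthly + DISK_COST / 12

print(f"MD1000 only, per backup:  ${md1000_per_backup:.2f}")
print(f"MD1000 + 2950, per backup: ${both_per_backup:.2f}")
print(f"Monthly with hardware:     ${total_monthly:.2f}")
print(f"Monthly with disks too:    ${total_monthly_with_disks:.2f}")
```

Unrounded, this comes out to about $17.69 and $98.94 a month. The table's $17.30 and $98.55 come from rounding the electricity to $2.30 and the hardware to $15 before adding.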

Carbonite Personal is out of the running because it does not support a server OS. You might be able to make it work if you deploy a desktop OS to a VM and try to back up network shares and such, but you lose a lot of capability doing this. Further, I was not able to find anything specifically calling out their upload throughput. It doesn’t much matter anyway, as they won’t let you install their agent on a server.

The Carbonite Server plan is more attractive in that it allows Server OS and databases to be backed up. The base plan starts at 500GB, backs up an unlimited number of servers, has an upload speed tested by Carbonite at about 60 Mbps, and costs $75 a month ($900 a year). However, to put this on par with our ability to replicate the full 11.8TB for $17.30 a month, we need to price out that much storage on Carbonite. When you bump up the storage to 11.8TB the monthly fee is $3,027 ($36,324 annually). That is… painful. 11.8TB is an awful lot of information to back up, but it is not uncommon at all for businesses to retain that much at any given point. The second issue is transfer speed, which is a problem with any off-site backup solution. At 60 Mbps it’ll take 17 days to replicate 11.8TB to Carbonite. Now, this is not a totally fair estimate, as no one in their right mind would do ONLY full backup replication to an off-site service; they would leverage differentials or deltas, but I am just being plain here. This also doesn’t factor in pulling the data back down from Carbonite.
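The 17 day estimate can be extrapolated straight from the benchmark sample in the table (54.6GB in 1 hour 53 minutes). This sketch assumes the sample rate holds for the entire transfer and that 11.8TB is counted as 11,800GB. The function name is invented for the example.

```python
def days_to_transfer(total_tb: float, sample_gb: float, sample_minutes: float) -> float:
    """Days needed to move total_tb, extrapolated from a timed sample transfer."""
    gb_per_minute = sample_gb / sample_minutes
    total_minutes = total_tb * 1000 / gb_per_minute
    return total_minutes / (60 * 24)


# Carbonite's tested upload: 54.6GB in 1 hour 53 minutes (113 minutes)
print(f"{days_to_transfer(11.8, 54.6, 113):.1f} days")  # prints 17.0 days

# Local replication sample: 54.6GB in 7.2 minutes
print(f"{days_to_transfer(11.8, 54.6, 7.2) * 24:.1f} hours")  # prints 25.9 hours
```

Same data, same math: about 17 days over the wire versus about a day on the local network.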

So – you save $3,009 a month by building your own storage chassis for replication on-site – great! But, what don’t you get? Well, you need to maintain the device(s), you need to consider an additional off-site location for replication, and you need to consider the reliability of the older devices used in this example. Further, you are going to have hard drives fail and you’ll need to factor in the replacement of those. And again, this is on-site replication; it is not archived onto tape and moved off-site or anything. Still, this is better than having nothing. The likelihood that my RAID50 will suffer total failure AND a 15-disk RAID6 will fail is pretty darned low. Remember too that I am considering turning on the MD1000 and Dell 2950 just for the full 11.8TB backup, whereas Carbonite solutions are constantly available. If I left the 2950 and MD1000 on all month it’d cost me $67 a month with the cost of the hardware dissolved into the first year. Still not bad compared to a Carbonite solution for the same amount of data.

Obviously this is all tongue-in-cheek. I am not proposing that a Dell 2950 and MD1000 is a better backup solution than kicking your stuff to Carbonite. I am sure people will think that’s what I am saying, though, so I am spelling it out here! However, if you have no way to back up a large array at home, this is a great alternative to forking out cash to a cloud provider, and it doesn’t actually cost you as much as you might expect!

I am a Sr. Systems Engineer by profession and am interested in all aspects of technology. I am most interested in virtualization, storage, and enterprise hardware. I am also interested in leveraging public and private cloud technologies such as Amazon AWS, Microsoft Azure, and vRealize Automation/vCloud Director. When not working with technology I enjoy building high performance cars and dabbling with photography. Thanks for checking out my blog!

March 20, 2018

This is a great write up. I have an MD1000 and was wondering how much power it would actually consume, so I stumbled on this page.

Do you have any updated consumption numbers?

Have you noticed a hit on your power bill?

Any reliability issues with these units?

Thanks!

April 21, 2015

Well, I agree on that point.

I copied your idea completely, with just a few differences.

1) I am from Europe and hardware is a bit more expensive on eBay than in the US, but still affordable.

2) I do hate clouds. You put data somewhere where you don’t know who has access to it, and it is out of your control. Even when you need it, you have no guarantee that it will be available at that time.

3) I decided to have my own storage for meaningful backups rather than a new, pricey home NAS device for 5-8 drives, which is some kind of box with built-in Linux on shitty hardware at nonsense prices. If it bricks, you’re lost.

4) For that smiley price, you can have more separate places to replicate the data you rely on.

What makes sense is to have professional hardware for a silly price; whatever goes wrong, you can buy another piece of it and rebuild your DAS freely for next to nothing. In fact, the only pricey part is the drives, for which I have new WD Reds. But that’s the price of the data you put on them, and it’s the same for a home NAS. So no big deal.

The only thing I am seriously thinking about is manually adjusting the fans to be less noisy. It can be done by adding an electronic component to them for a few cents.

With OpenManage and an R210 server, it’s a kind of beauty.

April 3, 2015

If you wanted unlimited storage, just use CrashPlan…

April 6, 2015

Right, they have unlimited storage along with Amazon, etc. The problem is actually getting the data there. A website review of CrashPlan did a 10GB upload in 3 hours. That’s about 7 Mbps. Uploading 11.8TB at 7 Mbps would take 21.2 weeks! So, yeah, if you have 21 weeks to run your servers then I guess that’d work lol. CrashPlan is meant for home users that put up data for the long term. They may accumulate a lot of data over years. But these “unlimited” cloud based storage solutions are not prepared to handle any bulk data upload, and especially not on a schedule.