Update as of Jan 14, 2018: VMware recommends not applying the patch

Unless you’ve been living under a rock lately (which would have been convenient), you probably know about the Meltdown and Spectre vulnerabilities that have caused a huge stir in the IT industry. There are three CVEs associated with these vulnerabilities.

The purpose of this blog entry is not to explain the vulnerabilities in any detail; if you need to better understand what this is all about, I suggest this article by Red Hat.

As a result of these vulnerabilities, Intel has released new microcode that, in summary, lets the operating system and hypervisor restrict the speculative execution that leaks “sensitive data in cache.” The downside is that compute performance will suffer. I’ve read people in various communities complaining that “Intel should just make the patch more efficient so we don’t have performance loss!” The problem with this idea is that the vulnerability is the performance gain we have today (or rather, had a week ago). The fix takes away some of the CPU’s speculative shortcuts around protected kernel data, so every time the kernel needs to do something (which is frequently), it runs without those shortcuts, and that is where the performance impact comes from.
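One rough way to observe the per-kernel-entry cost described above is to time a tight loop of a cheap system call before and after patching. This is only an illustrative sketch, not part of the original test: it assumes `os.getppid()` really enters the kernel on each call (the C library is assumed not to cache it), and `ns_per_syscall` is a hypothetical helper name. The measured time also includes Python interpreter overhead, so it understates the relative change; only a before/after comparison on the same machine is meaningful.

```python
import os
import time


def ns_per_syscall(iterations: int = 200_000, repeats: int = 5) -> float:
    """Return the best-of-N average cost, in nanoseconds, of one os.getppid() call.

    os.getppid() is used as a cheap call that crosses into the kernel.
    Taking the minimum over several repeats reduces scheduling noise.
    """
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter_ns()
        for _ in range(iterations):
            os.getppid()
        elapsed = time.perf_counter_ns() - start
        best = min(best, elapsed / iterations)
    return best


if __name__ == "__main__":
    # Run once before and once after applying the update, then compare.
    print(f"~{ns_per_syscall():.0f} ns per getppid() call (includes interpreter overhead)")
```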

So far there are many articles showing people running benchmarks on Windows and OSX, but I wanted to know what kind of impact to expect on a ZFS system. Because ZFS is a software-based storage solution that provides redundancy, parity, and compression, it relies heavily on the CPU. As such, I thought this would be a great test.
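To make the CPU dependence a little more concrete: a parity-protected pool has to compute parity over the data blocks of every stripe it writes, and recompute missing data when a disk is gone, on top of checksumming and compressing blocks. The sketch below is a simplified illustration that shows only single (XOR) parity; raidz2 also computes a second parity using Galois-field arithmetic, which costs more CPU. It is not ZFS’s implementation, and `xor_parity`/`rebuild_missing` are hypothetical names.

```python
from functools import reduce


def xor_parity(blocks: list[bytes]) -> bytes:
    """Compute single (P) parity across equally sized data blocks by byte-wise XOR."""
    if not blocks or len({len(b) for b in blocks}) != 1:
        raise ValueError("need one or more blocks of equal length")
    size = len(blocks[0])
    # Treat each block as a big integer so the XOR runs in C rather than a Python loop.
    acc = reduce(lambda a, b: a ^ b, (int.from_bytes(b, "little") for b in blocks))
    return acc.to_bytes(size, "little")


def rebuild_missing(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover one missing data block: XOR the parity with all surviving blocks."""
    return xor_parity(surviving + [parity])


if __name__ == "__main__":
    stripe = [b"disk0blk", b"disk1blk", b"disk2blk"]  # three equal-size data blocks
    parity = xor_parity(stripe)
    # Pretend disk1 failed and rebuild its block from the others plus parity.
    assert rebuild_missing([stripe[0], stripe[2]], parity) == stripe[1]
```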

In my lab I have a VM running ZFS on Ubuntu 16.04 LTS. The VM has an LSI 9211-8i storage controller passed through to it, with nine 4TB disks attached as well as a Micron 5100 MAX SSD as a separate log device. The pool is configured as raidz2 with compression turned on (lz4 algorithm). This VM runs on ESXi 6.5, so for this test I needed to run benchmarks with and without the Intel microcode applied in ESXi.

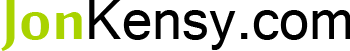

As you can see in the image below, I applied ESXi650-201801402-BG to my host(s). Right now, the host running the microcode is a Dell R620, which is where I tested the ZFS VM:

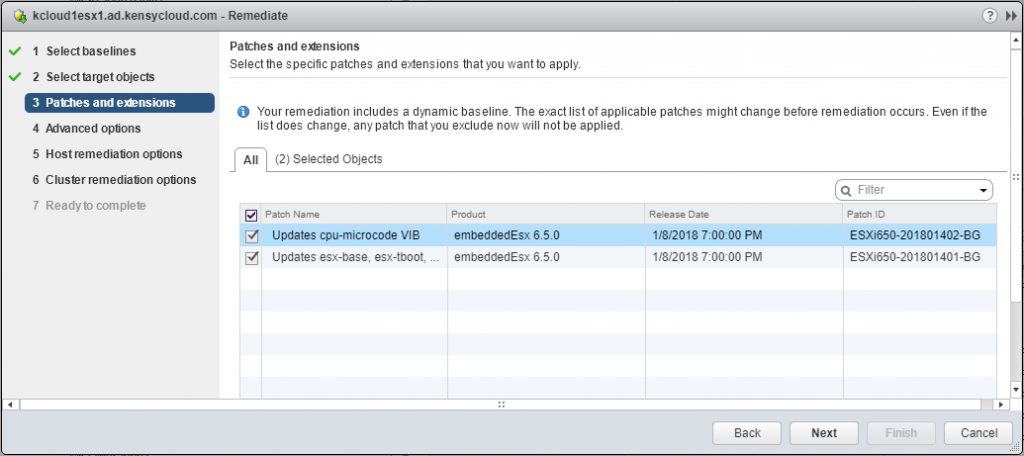

Once complete, you can see that the host has been remediated and is running the microcode:
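From inside a Linux guest, one way to see the kernel’s own view of its mitigation state is the sysfs directory `/sys/devices/system/cpu/vulnerabilities/`, which holds one file per issue (such as `meltdown`, `spectre_v1`, and `spectre_v2`). This interface was added to Linux in early 2018 and backported by some distributions, so older kernels may not have it; the sketch below returns an empty result in that case. It reports what the guest kernel believes; it does not by itself prove that the ESXi host has applied the microcode. `mitigation_status` is a hypothetical helper name.

```python
from pathlib import Path

VULN_DIR = Path("/sys/devices/system/cpu/vulnerabilities")


def mitigation_status(vuln_dir: Path = VULN_DIR) -> dict[str, str]:
    """Map each vulnerability file name (e.g. 'meltdown') to the kernel's status line.

    Returns an empty dict if the directory does not exist (non-Linux, or a
    kernel without this interface).
    """
    if not vuln_dir.is_dir():
        return {}
    return {
        entry.name: entry.read_text().strip()
        for entry in sorted(vuln_dir.iterdir())
        if entry.is_file()
    }


if __name__ == "__main__":
    for name, status in mitigation_status().items():
        print(f"{name}: {status}")
```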

How this test was performed

For my testing of this ZFS storage VM, I created an NFS datastore and presented it to my host(s) over a 10 Gbps network. I then put a VMware IO Analyzer VM on that NFS datastore and ran the following storage profiles:

- Max Write Throughput

- Max Read Throughput

- Max Write IOPS

- Max Read IOPS

VMware IO Analyzer is basically just an OVA of a VM running IOmeter with a web front end. It’s super handy for testing VM storage workloads and is my go-to anytime I need to benchmark or test storage performance. I ran each test only once, for 120 seconds, so these are single runs rather than an average of several. No other VMs were touching this storage during testing, so there was no background IO to skew the results.
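Because each profile ran only once, there is no measure of run-to-run variation. A minimal sketch of how repeated runs could be summarized with Python’s standard `statistics` module: compute the mean and sample standard deviation for each configuration and check whether the before/after gap is larger than the noise. The helper names are hypothetical, and the two-standard-deviation threshold is an assumed rule of thumb, not a formal significance test.

```python
import statistics


def summarize(runs: list[float]) -> tuple[float, float]:
    """Return (mean, sample standard deviation) for repeated runs of one profile."""
    if len(runs) < 2:
        raise ValueError("need at least two runs to estimate variation")
    return statistics.mean(runs), statistics.stdev(runs)


def gap_exceeds_noise(before: list[float], after: list[float], k: float = 2.0) -> bool:
    """Rough check: is the drop in means larger than k times the larger stdev?

    This is a rule of thumb, not a significance test.
    """
    mean_b, sd_b = summarize(before)
    mean_a, sd_a = summarize(after)
    return (mean_b - mean_a) > k * max(sd_b, sd_a)
```

With results from, say, ten runs of each profile before and after patching, a `True` from `gap_exceeds_noise` would suggest the drop is not just run-to-run noise.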

With all of that said, here are the results for the IOPS testing:

As you can see, there are fewer IOPS available after applying the microcode update. This is where I suspected the impact would be most severe. However, I expected write IOPS to take a heavier hit than they did, because the data is distributed across the disks with parity and compression. I was surprised that read IOPS were impacted as heavily as they were: read IOPS dropped by 3,391, a performance penalty of about 7.6%.

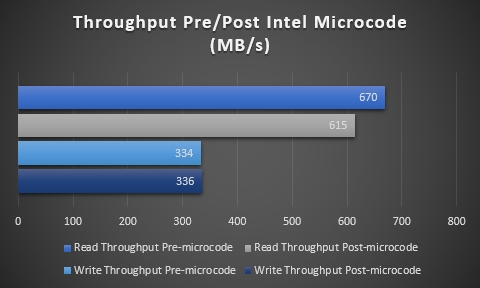

Next up are the results of the throughput testing:

Again, the post-microcode testing shows a performance loss. I am still surprised that writes were impacted less than reads (not at all, in this case). Read throughput took a 55 MB/s hit, which is about an 8% performance impact, while write throughput is either unaffected or the impact is too small to show up in this benchmark.
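The percentages quoted for both tests follow from penalty = (before − after) / before. Since only the drops and rounded percentages appear in the text, the sketch below works backwards to the approximate pre-patch read baselines they imply, and compares the implied read throughput with the raw line rate of the 10 Gbps network. It assumes MB means 10^6 bytes and ignores NFS/TCP overhead; because the percentages are rounded, the implied baselines are approximate. The helper names are hypothetical.

```python
def penalty_pct(before: float, after: float) -> float:
    """Performance penalty as a percentage of the pre-patch value."""
    return (before - after) / before * 100.0


def implied_baseline(drop: float, pct: float) -> float:
    """Work backwards from an absolute drop and its (rounded) percentage to the baseline."""
    return drop / (pct / 100.0)


# Figures quoted in the text; percentages are rounded, so results are approximate.
read_iops_before = implied_baseline(3_391, 7.6)  # roughly 44,600 IOPS
read_mbps_before = implied_baseline(55, 8.0)     # about 688 MB/s

# Raw 10 Gbps line rate in MB/s (10^6 bytes), ignoring protocol overhead.
LINE_RATE_MBPS = 10e9 / 8 / 1e6

print(f"implied read IOPS baseline: ~{read_iops_before:,.0f}")
print(f"implied read throughput baseline: ~{read_mbps_before:.0f} MB/s "
      f"({read_mbps_before / LINE_RATE_MBPS:.0%} of the 10 Gbps line rate)")
# Sanity check: the penalty formula reproduces the quoted percentage.
print(f"read IOPS penalty: {penalty_pct(read_iops_before, read_iops_before - 3_391):.1f}%")
```

The comparison suggests the read test was not simply saturating the 10 Gbps link, so the drop is plausibly coming from the storage VM rather than the network.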

Remember that this is a very brief test, but I wanted to see what kind of impact to expect, especially on a storage node. I’ve built a large ZFS node (832TB, ZFS on Linux, Project “Cheap and Deep”) that I’d love to baseline with this microcode update, but it’s too early to apply that sort of fix to production storage.

My takeaway is this: for my purposes, I won’t notice the loss of 3,391 IOPS or 55 MB/s of throughput. However, the impact might show up more clearly in a much more strenuous test with many VMware IO Analyzer VMs pounding away. For production environments where clients demand the best possible performance, a 7.6-8% impact is pretty rough, especially since the current tenor of this whole ordeal is one of being cheated out of performance.

If a storage node is extremely well secured, runs no VMs, and only presents storage to whitelisted hosts, it could perhaps go without the microcode update, but I want to read more about what large-scale storage providers think first. I’ve actually read of HPC storage solutions running Lustre where the performance impact was so high that the teams managing them recommend not patching. Time will tell…

Thanks for reading and let me know your thoughts!

I am a Sr. Systems Engineer by profession and am interested in all aspects of technology. I am most interested in virtualization, storage, and enterprise hardware. I am also interested in leveraging public and private cloud technologies such as Amazon AWS, Microsoft Azure, and vRealize Automation/vCloud Director. When not working with technology I enjoy building high performance cars and dabbling with photography. Thanks for checking out my blog!

February 20, 2018

Thanks jon for this in-depth analysis, very useful especially for those in similar situations