Pump up the volume(s) with Synology DS1513+

Hey everyone!

I am in the process of setting up and deploying a Synology DS1513+ for one of my most important clients (…my mom!). Right now she’s running her software development company off of a couple of desktops (a Core2Quad I built her a number of years ago and a new i7-4770k with 32GB of RAM and a 512GB SSD that I built pretty recently). She’s backing up her data with, I believe, Carbonite.

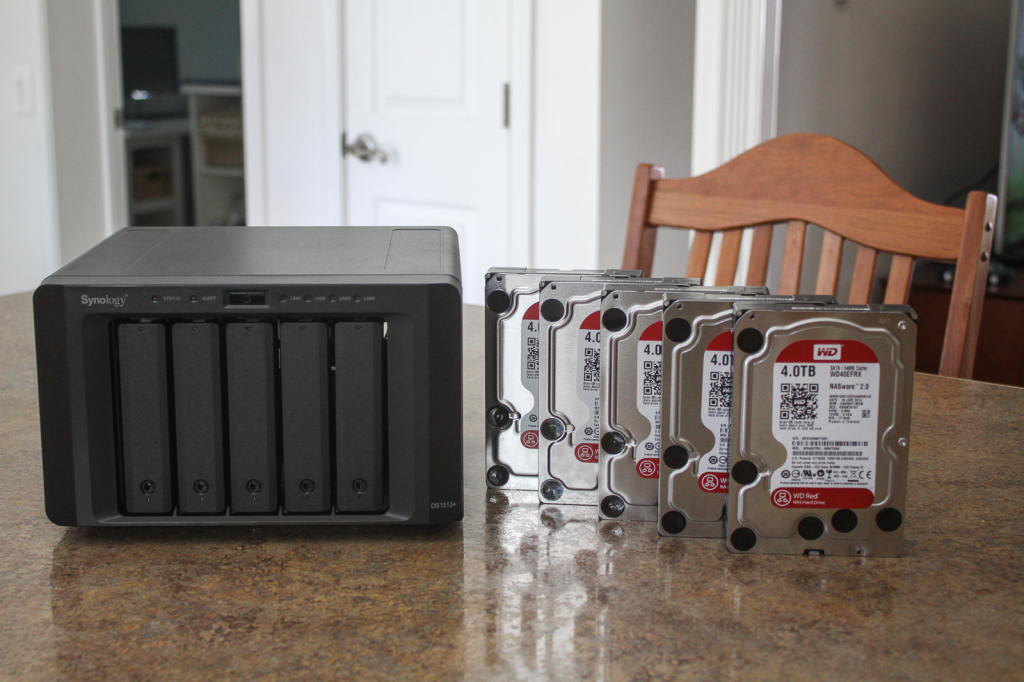

Her desktops and Carbonite have been working fine, but the local space is pretty finite between the two machines, and if a disk did fail (especially in the older machine) there would be a lengthy download or a wait while Carbonite ships out a drive. To alleviate that, I’ve set her up with a Synology DS1513+, a 5-bay NAS geared toward small- to medium-sized businesses. I’ve spec’d the unit with (5) Western Digital Red WD40EFRX 4TB drives. With one drive’s worth of space going to redundancy, that works out to 16TB of usable disk (before formatting)! Pretty impressive!
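Here is a quick sanity check on the capacity math. It is only a sketch: it assumes a single-parity layout (RAID5, or SHR with equal-sized disks) and that the management UI reports binary units (TiB) while drive makers quote decimal terabytes, which would explain why the formatted figure comes out lower than the number on the box. The function names are my own for illustration.

```python
# Back-of-the-envelope capacity for a parity-protected array.
# Assumption: one drive's worth of space is consumed by redundancy.

def usable_tb(drive_count: int, drive_tb: float, parity_drives: int = 1) -> float:
    """Usable capacity in decimal terabytes (1 TB = 10**12 bytes)."""
    return (drive_count - parity_drives) * drive_tb


def tb_to_tib(tb: float) -> float:
    """Convert decimal terabytes to binary tebibytes (1 TiB = 2**40 bytes)."""
    return tb * 10**12 / 2**40


raw = 5 * 4.0               # five 4TB drives
usable = usable_tb(5, 4.0)  # one drive's worth goes to parity
print(f"raw: {raw:.0f} TB, usable: {usable:.0f} TB, "
      f"about {tb_to_tib(usable):.2f} TiB before filesystem overhead")
```

Whatever gap remains between that binary figure and what the NAS actually shows would be filesystem and system-partition overhead.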

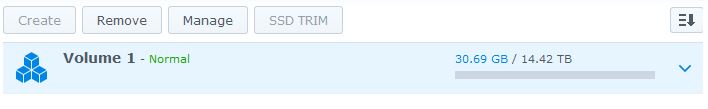

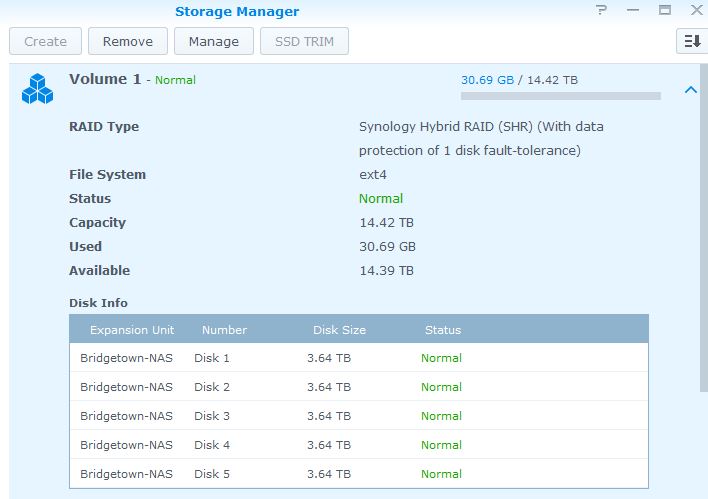

You can set up a DS1513+ with 5 drives in either RAID5 or “SHR”, which stands for Synology Hybrid RAID. In a 5-drive configuration with identical disks, the two are essentially the same. I chose SHR because it leaves us more flexibility if we ever decide to add the expansion cabinet with different-sized disks. You can read more about Synology Hybrid RAID here. So, once the disks are initialized and formatted in an SHR configuration we’re left with 14.42TB as shown below:

One of the reasons I recommend Synology products is their software. Their DSM operating system is just awesome to use, both “easy to configure” and “feature-rich”. You can set up an array and create shared folders without really knowing a whole lot about networking or storage. At the same time, these units offer iSCSI targets, NFS shares, and advanced permissions for all of it. Further yet, the DSM operating system offers packages that convert your DiskStation from a simple NAS into a powerful network node. Packages include web servers, mail servers, DNS servers, OpenVPN servers, download managers, video surveillance stations, and more. It’s really, really impressive, and it takes a lot for me to be blown away.

As mentioned, one of the perks of the Synology units is their ability to act as an iSCSI device. This means you can create a LUN and attach the DiskStation as an iSCSI disk in Windows or VMware. This is particularly useful because right now the ESXi host we’re using relies on internal RAID10 storage (1.84TB formatted, in which I had to replace a 1TB Western Digital Black disk this morning due to predictive failure). That works, but adding the DiskStation as an iSCSI device will allow for Storage vMotion and high availability if we add a second ESXi host some day.

As mentioned, this unit is geared toward small- to medium-sized businesses. The reason I wouldn’t recommend it for large businesses is that it does not offer SAS drive support. So, no 10k or 15k RPM enterprise drives for this unit, but that’s OK because it doesn’t carry a large-company price tag, either. You could put in Western Digital Blacks or even SSDs if you wanted, just not SAS. No big deal, honestly. Additionally, it lacks redundant power supplies. Again, not a big deal: the most common reason for PSU redundancy is to survive a supply failure, and most companies do not have redundant power providers regardless of size. So, your best bet is to put it on a UPS and hope that the power supply doesn’t fail. I don’t think there’s a battery for the controller, so unplugging the device mid-write will likely lose the transaction.

One area that exceeds expectations, however, is network connectivity. The DS1513+ comes with four Gigabit Ethernet ports that support link aggregation (LAG) and comply with the Link Aggregation Control Protocol (LACP, 802.3ad standard). This is great because you can spread the four ports over two different network switches in LAGs for speed and redundancy. Or, in my case, you can create a 4-port LAG with LACP and get a fault-tolerant connection with 4Gbps of aggregate bandwidth instead of a single 1Gbps link. This is very useful because the 5 spindles in this unit can easily outperform a single 1Gbps connection. Consider that 1Gbps is ~125 MB/sec and the average single disk can transfer about 90 – 120 MB/sec… 5 of them would have no trouble saturating a single gigabit link.
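To put rough numbers on that, here is a small sketch of the arithmetic. It is a naive upper bound: it ignores protocol overhead, RAID parity cost, and random I/O, and simply sums the sequential throughput of the disks. The function names are mine.

```python
# Rough comparison of disk throughput vs. network capacity.
# Assumption: 1 Gbps = 1000 megabits/s = 125 MB/s, no protocol overhead.

GIGABIT_MB_PER_S = 1000 / 8  # 125.0 MB/s per gigabit port


def link_capacity_mb_s(ports: int) -> float:
    """Aggregate capacity of a LAG made of gigabit ports, in MB/s."""
    return ports * GIGABIT_MB_PER_S


def array_throughput_mb_s(disks: int, per_disk_mb_s: float) -> float:
    """Naive best case: sequential throughput summed across all disks."""
    return disks * per_disk_mb_s


low = array_throughput_mb_s(5, 90)
high = array_throughput_mb_s(5, 120)
print(f"5 disks: {low:.0f} to {high:.0f} MB/s")
print(f"1 port:  {link_capacity_mb_s(1):.0f} MB/s")
print(f"4 ports: {link_capacity_mb_s(4):.0f} MB/s")
```

Even at the low end of the per-disk range, the array is well past what one gigabit port can carry, while the 4-port LAG lands in the same ballpark as the disks.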

Another cool feature of this particular unit is that it supports (2) USB 3.0 connections and (4) USB 2.0 connections (I haven’t tested the throughput yet), as well as (2) eSATA connections. Most reviews will mention attaching a single eSATA drive to these ports, but the truth is they can be connected to up to (2) Synology DX513 external cabinets. So, if you were to connect two DX513s you could hold (15) disks in your array. If you created this as a RAID5 (and I would not do this – I would run RAID6 at that point) you could attach 56TB of usable disk to the device. This is pretty crazy! With larger disks coming to market you could probably go even higher. My only reservation is whether an eSATA connection provides enough throughput to an external cabinet (or two). The eSATA ports are on a daughterboard inside the device that connects to the motherboard via a single PCIe x1 slot.
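For the curious, here is the math behind that 15-disk figure, plus what RAID6 would cost in capacity. This is a sketch that assumes all fifteen bays hold 4TB drives in one array; the helper name is my own.

```python
# Usable capacity of a fully expanded 15-bay setup (5 internal + 2 x 5 external).
# Assumption: every bay holds a 4TB drive and all drives are in one array.

def usable_tb(drive_count: int, drive_tb: float, parity_drives: int) -> float:
    """Usable decimal terabytes after subtracting parity drives."""
    return (drive_count - parity_drives) * drive_tb


raid5 = usable_tb(15, 4.0, parity_drives=1)  # survives one disk failure
raid6 = usable_tb(15, 4.0, parity_drives=2)  # survives two disk failures
print(f"RAID5: {raid5:.0f} TB usable")
print(f"RAID6: {raid6:.0f} TB usable (costs {raid5 - raid6:.0f} TB more)")
```

Giving up one more drive’s worth of space to tolerate a second failure during a long rebuild seems like a fair trade with that many spindles.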

Enough talk, let’s make it work!

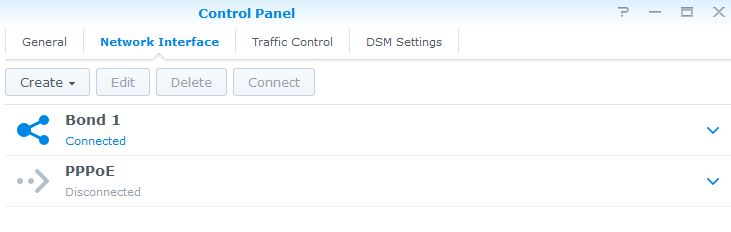

So, the first thing you do is jam all the disks into the unit, which is super simple because of the tool-less design. Once done, you simply plug in the power and network connections. Because I wanted to use a 4-port LAG (note: my network switches support it!), I created a team (called a bond in the DSM software) on the NICs:

When you first create the bond, you have to choose some settings, such as the type of aggregation you want and the number of interfaces in the bond. You’ll notice my bond is called Bond 1; you can also have a Bond 2 if you only put two interfaces in each group. So, I selected an 802.3ad LACP configuration on all four network interfaces:

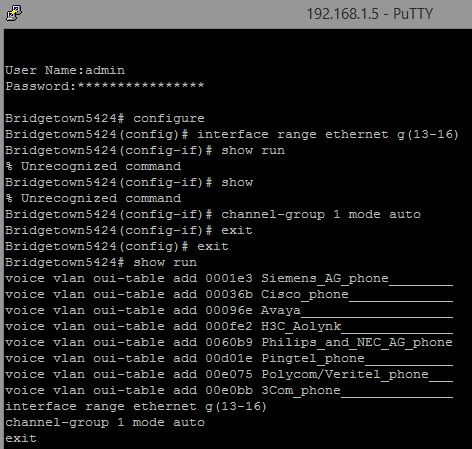
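One thing worth knowing about an 802.3ad bond is that traffic is typically spread across the member links per flow, not per packet, so any single conversation still rides one 1Gbps link. The sketch below illustrates the idea with a simplified layer-2 style hash (XOR of the last byte of the source and destination MACs, modulo the number of links). I am assuming the DiskStation and the switch use something along these lines; the real hash policy depends on the device, and the MAC addresses here are made up.

```python
# Simplified illustration of per-flow link selection in a LAG.
# Assumption: a layer-2 style hash; real devices may hash on IPs/ports too.

def pick_link(src_mac: str, dst_mac: str, link_count: int) -> int:
    """Return the index of the bond member that carries this MAC pair."""
    src_last = int(src_mac.split(":")[-1], 16)
    dst_last = int(dst_mac.split(":")[-1], 16)
    return (src_last ^ dst_last) % link_count


nas_mac = "00:11:32:aa:bb:01"  # hypothetical NAS bond address
clients = [                    # hypothetical client addresses
    "00:1e:c9:00:00:10",
    "00:1e:c9:00:00:11",
    "00:1e:c9:00:00:12",
    "00:1e:c9:00:00:13",
]

for mac in clients:
    print(f"{mac} -> link {pick_link(mac, nas_mac, 4)}")
```

The takeaway is that the bond helps most when several clients hit the NAS at once; one desktop copying one file will not suddenly see four times the speed.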

Once that’s done, the device will report that the 802.3ad configuration has failed, because the unit is plugged into ports that are not configured in an LACP LAG group yet. I know that I have ports 13-16 open on a Dell PowerConnect 5424, so I ran the following commands:

You can see that LAG group 1 is set up for Ethernet ports 13-16, perfect! That’s all there is to it. Oh, actually, it’s not: you’ve edited the running configuration, but if the switch were to reboot it would not load this change! You need to do the following:

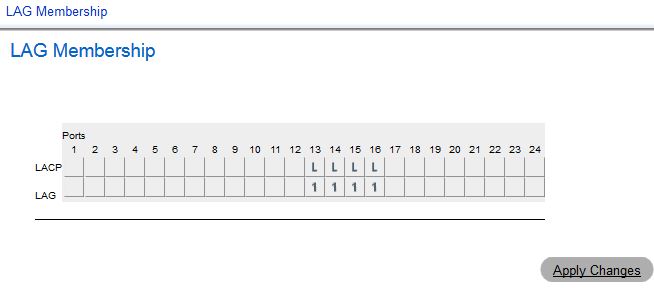

It’s as simple as typing “copy running-config startup-config” and you’re done. I’ve been bitten by this a few times in past work. If you don’t trust yourself in a console, you can configure this through the OpenManage GUI for the switch (but that’s for weenies) – we’ll just confirm the settings are there:

And there you see ports 13-16 are configured for LAG group 1 and have LACP enabled. Done!

Now your DiskStation should no longer complain that LACP/802.3ad is in a failed state. Because I’ve got 20TB worth of disks in this device, all that is left is to let it initialize the volume. It takes a while, but eventually the volume will show “Normal” and you’ll be done. You can create shares and move files while the volume is being validated, though:

I believe this process took about 2 – 3 hours with these disks. I then updated the DSM software right after and copied over a bunch of ISOs that are frequently used. So, right now, we’re only using 30.69GB of… 14.39TB. Pretty funny. The plan is to move this DS1513+ to the same rack as the Dell 2950 ESXi host and create a 2TB iSCSI target for VMs to live on with RAID5 (SHR) redundancy.

Look here again for another tutorial/guide on setting up the iSCSI connectivity soon!

I am a Sr. Systems Engineer by profession and am interested in all aspects of technology. I am most interested in virtualization, storage, and enterprise hardware. I am also interested in leveraging public and private cloud technologies such as Amazon AWS, Microsoft Azure, and vRealize Automation/vCloud Director. When not working with technology I enjoy building high performance cars and dabbling with photography. Thanks for checking out my blog!
