These days I have found that my homelab has transformed from inconvenient to sufficient and back again. By this, I mean that I once had a cluster of Raspberry Pis running web servers, which evolved into my Lenovo TS140 ESXi host build, which ultimately evolved into a multi-site vSphere environment with over 100TB of storage and 800GB of RAM! Through this evolution one thing has been my focus: capacity – compute, RAM, and storage. I’ve built the platform out over time so that I can lab without being confined, but about 4–6 months ago something bad happened.

My Supermicro SC846 server, which had an Intel S2600CP2J board powering it at the time, experienced a hardware failure. The motherboard would no longer POST, and I had about 30TB of lab on it, some of which was literally piloting concepts for a couple of client migrations I was planning. Of course, I also run some other people’s “production” websites, etc. off of this setup (with full disclosure). Because I have 20+ disks in the SC846 chassis running things, I can’t simply pull them and drop them and the HBA into a desktop. I acted as fast as possible, replacing the motherboard (this time with a Supermicro X9DRI-LN4F+), but the reality is that my lab was down for about a week. Fortunately I use vSphere Replication for all things important and failed critical things over to my other lab, but if only I had backups and more than one host in this environment!

Challenge accepted

Putting pen to paper I realized that if I wanted to do this right (as right as homelab can be, anyway) I had a few requirements to meet:

- New host(s) need to be E5-26xx v1 or newer to match the existing Supermicro host’s EVC mode

- Have enough bays to fit at least 12–16TB (minimum) of disks for backups

- Support an HBA/backplane configuration to run ZFS either on bare metal or VM with HBA pass-through

- Support 24 DIMMs of memory in the event I want to make this a killer ESXi host later

- Have PCIe slots free to allow installation of 10Gbps adapter(s)

- Must be relatively affordable (subjective)

So, of course, once I started thinking this over my mind went the way of the already-existing Supermicro SC846 host I built. But between the motherboard, chassis, SAS2 backplane, and power supplies I bought, the price was much too high for a “secondary” server. That’s when I started looking at Dell R620s and other similar Sandy Bridge systems. I called a few wholesalers and found that I could get a 10-bay Dell R620 with modest E5-2620 v1s, no RAM, no disk controller, no network interface, and one power supply for $350 plus shipping. Deal! I can add RAM I have lying around, order the NIC I want as well as the 10GbE adapter, etc., and have a sweet 1U second ESXi host that runs ZFS with an HBA passed through, providing direct access to the 2.5″ backplane!

There she is in all her beauty. Again, this system isn’t in the same state as when I received it for $350 – that would be kind of boring to blog about. I’ve added RAM that I had sitting around for another project as well as disks, HBA, and network interfaces. I think this build is going to surprise people… or at least I hope it does. Try and make it through the whole series if you can.

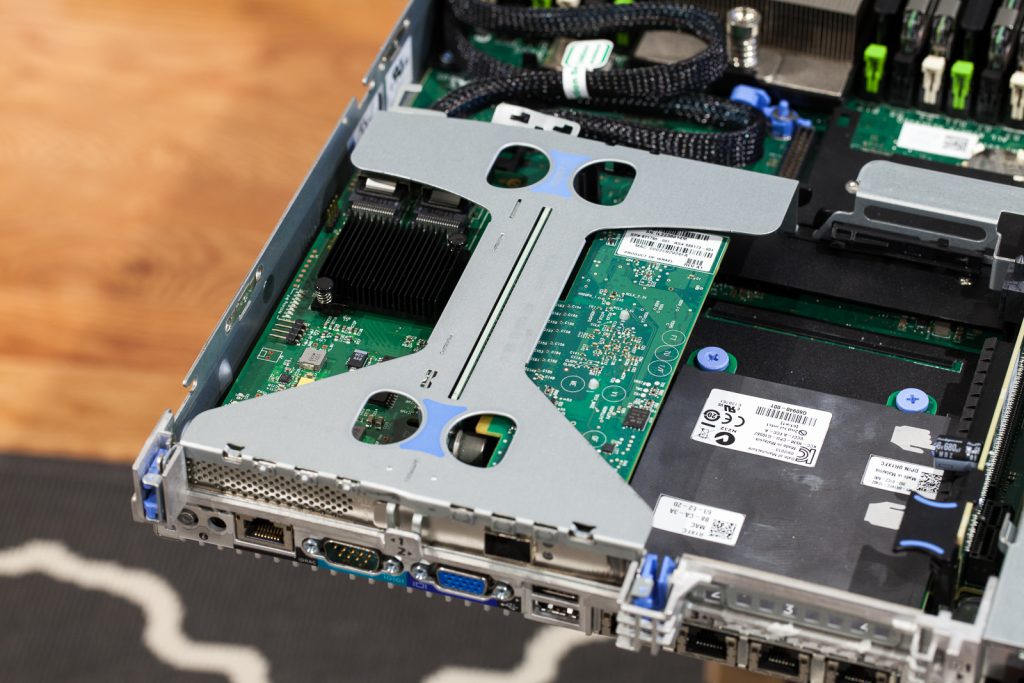

The first thing I added upon receiving the R620 was a network adapter:

The R620 uses a daughter card configuration for the primary network interface. This is the first generation in which Dell did this. I love the convenience – previously you were stuck with whatever adapter was built onto the motherboard and had to add adapters in PCIe slots if you needed something else. I am using the Dell R1XFC Intel i350 1GbE Quad Port daughter card, which I found for about $20 on eBay. You can also use combination cards that have a mix of 1GbE/10GbE interfaces, but expect to pay about $120+ for those. The nice part is that those cards use the Intel X520 and X540 chipsets to provide 10GbE connectivity via 10GBASE-T or SFP+, depending on what you need. You can also find a Broadcom 1GbE Quad Port daughter card if you’d like to go with that chipset. Super flexible.

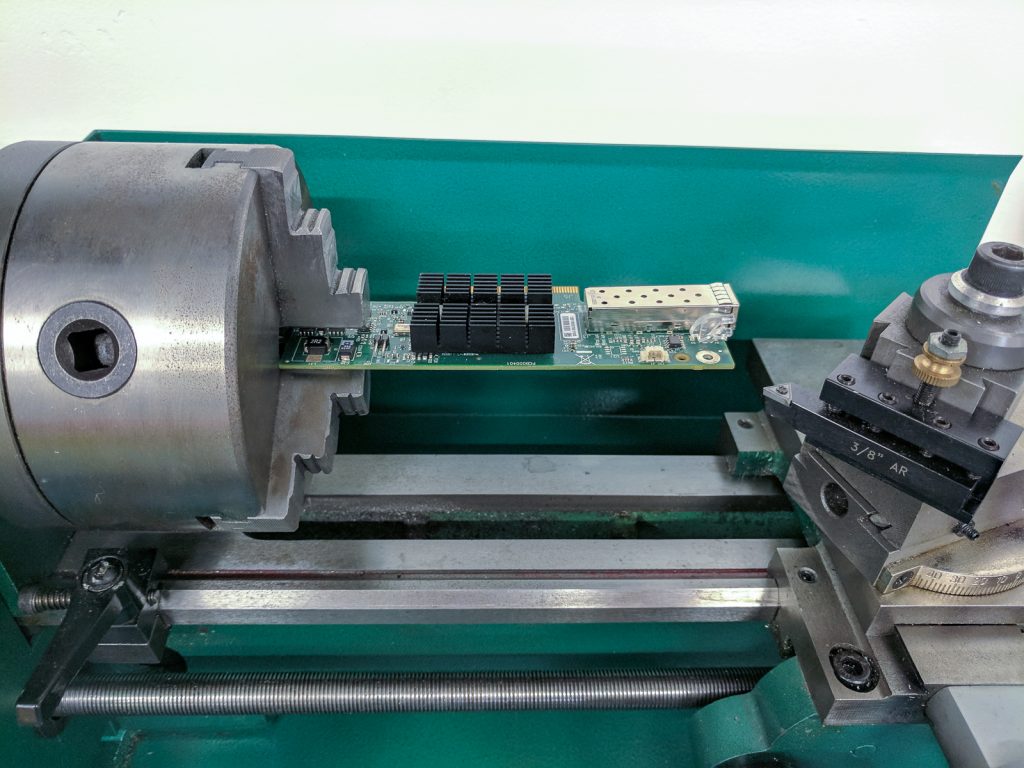

After settling on the main network adapter, I knew I wanted to put one of my spare Mellanox MT26448 ConnectX 10GbE SFP+ half-height cards in this box. I picked this card up on eBay for about $20. The only drag is that the R620 10-bay chassis comes with 3 half-height PCIe slots, my card came with a full-height bracket, and even after searching I could not find a half-height bracket for it. I’d either need to buy the 2-port version for $100+ or get creative. I chose the latter, of course:

What the…? Just kidding, lathes were not involved.

You can see above I have an existing half-height PCIe bracket as a reference and the original full-height bracket removed. It’s not a matter of just hacking off the end, because then there’s no way to fasten the card into the system and it’ll work its way out of the PCIe slot when you insert a cable. So, instead, I cut off the original 90-degree portion and moved it. This is when I also realized I needed to flip it over.

I chopped the 90-degree part off and TIG welded it very carefully in place. I then cut off the remaining straight portion once I was happy with it. Here is the end result:

Boom! Probably the only half-height Mellanox ConnectX single-port card you can find on the internet right now! If you do manage to find half-height brackets, do share, because I know a lot of people on forums are searching without solutions.

Now that we’ve got network connectivity sorted out, we need to pick an HBA to control the 10-bay backplane. I want to use ZFS on Linux for this project (do you see a trend of that on my blog lately?) since I have had such good experiences with it in the past. I love how flexible and reliable it has been. Naturally, I picked the cheapest and most typical HBA: the Dell H200/LSI 9211-8i. I personally went with an actual LSI 9211-8i so I wouldn’t have to mess with flashing firmware for true IT mode, but I have used H200s in the past without any issue.

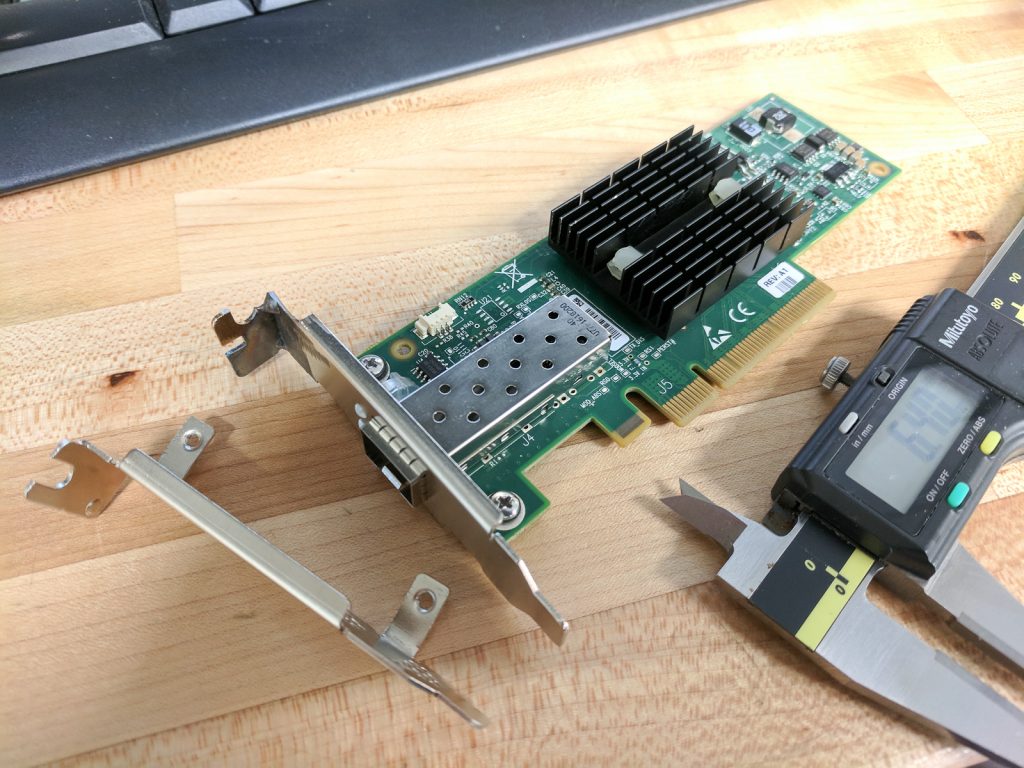

Fitting the HBA into the chassis wasn’t hard but it’s also not the prettiest. I probably should have taken apart the PCIe riser and cut this portion away to make it pretty but I was lazy.

You can see that the cables come straight off the back of the HBA (180 degrees), so the sheet metal was in the way. I could trim it and make it nice, but the truth is there are no sharp edges with it bent, and I can bend it back if there is ever a need.

Now we’ve got our 1GbE daughter card, our 10GbE PCIe card, and our HBA all installed:

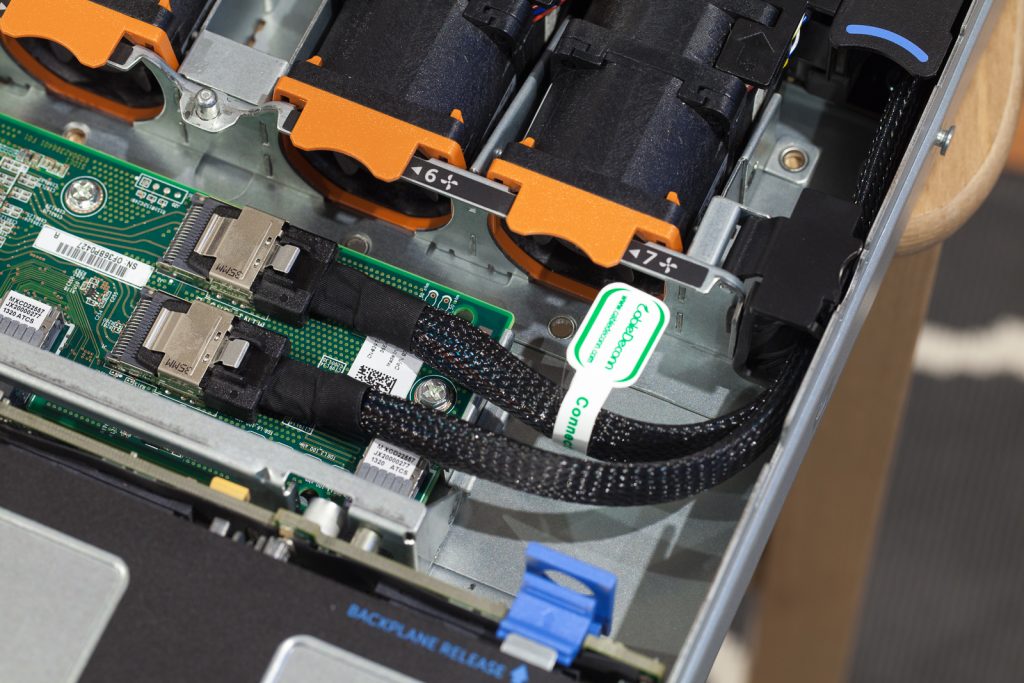

For cables from the HBA to the backplane I just used ordinary SFF-8087 to SFF-8087 cables. The first cables I bought were 1/2″ too short, so I ended up using 0.7 m cables, which are a bit long, but I was able to bunch the cable up and fit it nicely:

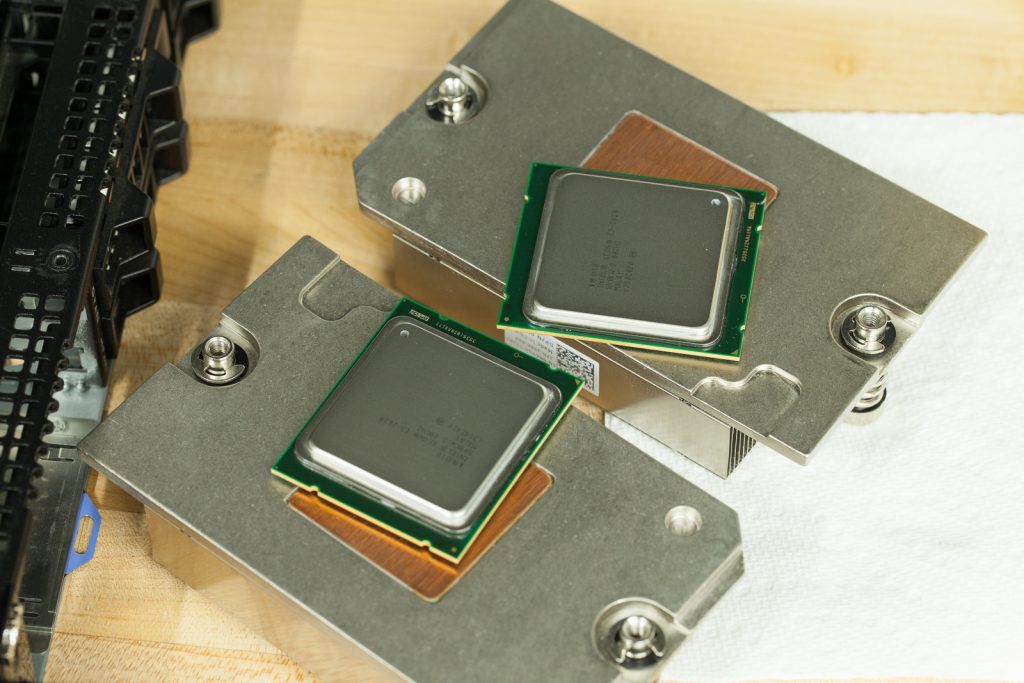

Next up is the guts of the thing. As mentioned earlier, I went with the cheapest CPUs possible to get this project rolling. Because the Sandy Bridge-EP platform has such decent offerings, even on the low end, I ordered this R620 from my wholesaler with E5-2620 v1 CPUs. These are 6-core/12-thread 2.0GHz CPUs. They’re nothing to write home about, but the core count isn’t bad for a lab, and they’ll work in concert with my other host, which has E5-2670 v1s. As with all of my server builds, I clean the gross Dell thermal compound off and clean the dust/grime out of the heatsinks to keep them as cool as possible and fan noise as low as possible:

You can see that someone used a whole ton of thermal paste. Because I asked for these CPUs, I suspect that the wholesaler did the install. A little too heavy for my taste – glad I checked.

Ah, all cleaned up. I use Goo Gone and let it soak into the original thermal paste. I clean it up with paper towels as best I can. I then go back with isopropyl alcohol and paper towels until I can wipe the surfaces and not see any silver/grey coloring on the towel. I then apply my thermal compound of choice, Noctua NT-H1. A little goes a long way:

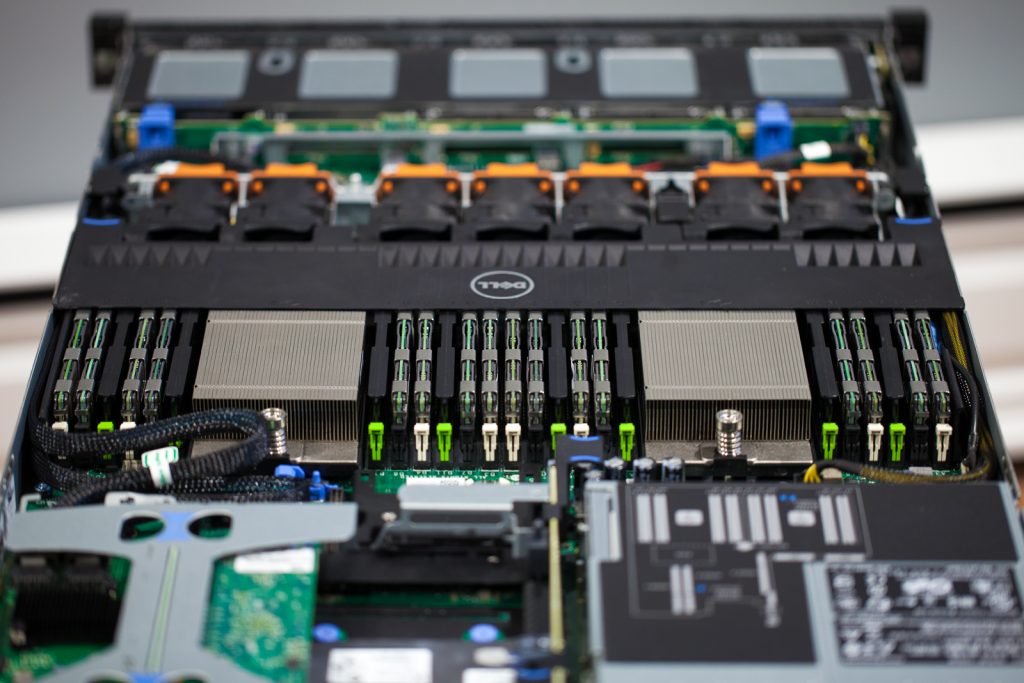

With that all buttoned up, the only remaining hardware inside the chassis is the RAM. I had 64GB (8x8GB) of 2Rx4 PC3-12800R DIMMs from another project to use. But 64GB is not a lot if I want this box to be an ESXi host running the ZFS VM plus other workloads beyond backups. Buying more matching memory would be pretty expensive, as the 64GB of PC3-12800R alone cost $300+ on eBay. So, instead, I picked up 256GB (16x16GB) of 4Rx4 PC3-8500R memory. It’s slower and it’s Quad Rank, so I can only use 16 DIMMs per box, but I was able to get the whole lot for about $500. This is one of the most expensive parts of the build, but I realized I wanted two hosts with the same amount of memory so I can HA the VMs between them (assuming the storage is shared) without having to compromise by shutting things down:
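To put the memory trade-off in numbers, here is a small Python sketch comparing cost per GB for the two options, using the approximate prices above. Because the matching PC3-12800R was quoted as “$300+”, its figure is only a lower bound, and actual eBay pricing will vary.

```python
# Ballpark cost-per-GB comparison of the two memory options discussed above.
# Prices are the approximate eBay figures from the post ("$300+" for 64GB of
# PC3-12800R, "about $500" for 256GB of PC3-8500R); real listings will vary.


def cost_per_gb(dimm_count: int, dimm_gb: int, total_price_usd: float) -> float:
    """Return the price per GB for a lot of identical DIMMs."""
    total_gb = dimm_count * dimm_gb
    return total_price_usd / total_gb


# 8x8GB 2Rx4 PC3-12800R: the faster, matching option (a lower bound, since "$300+")
fast_lot = cost_per_gb(dimm_count=8, dimm_gb=8, total_price_usd=300)
# 16x16GB 4Rx4 PC3-8500R: the slower, quad-rank lot that was actually bought
slow_lot = cost_per_gb(dimm_count=16, dimm_gb=16, total_price_usd=500)

print(f"PC3-12800R,  64GB: ${fast_lot:.2f}/GB")  # $4.69/GB
print(f"PC3-8500R,  256GB: ${slow_lot:.2f}/GB")  # $1.95/GB
```

Roughly 2.4x cheaper per GB is what makes the slower quad-rank lot attractive for a lab host, where capacity usually matters more than memory bandwidth.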

It’d be nice to drop another 8 DIMMs of this memory in, but the memory controller does not support more than 16 Quad Rank DIMMs in the system… so for now, 256GB is enough. We’re done going over the inside bits! Oh, and to keep it simple, I just install the hypervisor to a USB thumb drive in the R620’s internal USB port. Easy enough!
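The 16-DIMM ceiling falls out of simple slot-and-rank arithmetic. This sketch assumes the R620’s 24 slots are laid out as 2 sockets x 4 channels x 3 slots per channel, and that the Sandy Bridge-EP memory controller allows at most 8 ranks per channel. Both are my assumptions rather than figures from this post, so check Dell’s memory population guidelines before relying on them.

```python
# Why quad-rank RDIMMs top out at 16 in a 24-slot, dual-socket Sandy Bridge-EP
# server. Assumed (not stated in the post): 2 sockets x 4 channels x 3 slots per
# channel = 24 slots, and a memory-controller limit of 8 ranks per channel.

SOCKETS = 2
CHANNELS_PER_SOCKET = 4
SLOTS_PER_CHANNEL = 3
MAX_RANKS_PER_CHANNEL = 8  # assumed per-channel rank limit


def max_dimms(ranks_per_dimm: int) -> int:
    """Return how many DIMMs of a given rank count the whole system can hold."""
    # Each channel is limited both by its physical slots and by total ranks.
    per_channel = min(SLOTS_PER_CHANNEL, MAX_RANKS_PER_CHANNEL // ranks_per_dimm)
    return SOCKETS * CHANNELS_PER_SOCKET * per_channel


for ranks in (2, 4):
    print(f"{ranks}-rank RDIMMs: up to {max_dimms(ranks)} DIMMs")

# 16 quad-rank 16GB DIMMs -> 256GB, matching the build above
print(f"Max with 16GB quad-rank DIMMs: {max_dimms(4) * 16}GB")
```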

This is great but I am here for the storage!

Yeah, I hear you, so let’s move on.

This is where I am fully prepared to take some flak. Clearly this thing has 10 bays, so the only possible way to get to 40TB within this box is with ten 2.5″ 4TB disks. But, right now at least, there are no 4TB 2.5″ enterprise SAS/SATA/NL drives available. So, what gives? OK, if you’ve got a drink, take a sip, and if not, make yourself a stiff one, because I did something that even I wouldn’t recommend (at least at first).

It turns out that Micro Center sells the Seagate Backup Plus 4TB Portable Hard Drives for $99–$109 in-store. Two things about what I just said make my stomach upset: A) Seagate, and B) aren’t these the Shingled Magnetic Recording (SMR) drives that totally suck? Yes and yes. Seagate is never my first choice, and I hate the idea of SMR in terms of performance, but maybe we can address that. However, remember, the whole point of this is to build capacity into 1U for a pretty cheap price while also providing a decent hypervisor underneath. I originally purchased 6 of these disks, removed them from their USB enclosures, and installed them in the Dell R620:
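For a sense of what “pretty cheap” means here, this sketch works out the per-TB price and total outlay at the quoted $99–$109 range. The drive counts (6 initially, 9 after adding three more later in the build) come from this post; tax and shipping are ignored.

```python
# Ballpark drive cost for the shucked Seagate Backup Plus 4TB portables, using
# the $99-$109 in-store price range from the post (tax and shipping ignored).

from typing import Tuple

DRIVE_TB = 4
PRICE_LOW_USD = 99
PRICE_HIGH_USD = 109


def price_per_tb(price_usd: float, capacity_tb: float = DRIVE_TB) -> float:
    """Price per raw TB for a single drive."""
    return price_usd / capacity_tb


def lot_cost(drives: int) -> Tuple[int, int]:
    """Low and high total cost for a given number of drives."""
    return drives * PRICE_LOW_USD, drives * PRICE_HIGH_USD


print(f"${price_per_tb(PRICE_LOW_USD):.2f}-${price_per_tb(PRICE_HIGH_USD):.2f} per raw TB")
for n in (6, 9):  # the initial purchase, and the count after adding three more
    low, high = lot_cost(n)
    print(f"{n} drives: ${low}-${high}")
```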

Wait – that’s not a Seagate 4TB disk on the end. You’re right – that’s a Micron 5100 MAX 240GB SSD! If you follow my blog you might recognize that part. That’s because I also used this SSD in the Project Cheap and Deep 832TB Supermicro ZFS build for the OS install. Ordinarily, I’d use Intel S3710 400GB SSDs like I have in my other lab host. However, due to the nonsense going on in the flash industry right now, those drives are $350 used and $600+ new! I reached out to a few suppliers and found that the backorder was too long for my plans. I then found that CDW had this drive for $30 less than the other guys, in stock with no backorder. I don’t really prefer CDW and would much rather support the local guys, but cheaper and sooner made it easy. I chose the Micron 5100 MAX because of its high write endurance (5 DWPD) and low latency, which will be necessary in this application since I am using it as a ZFS SLOG device. If you want 550 MB/s throughput for your desktop, this is not the SSD you want.
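The 5 DWPD (drive writes per day) rating translates into a daily and lifetime write budget with a little arithmetic. The five-year rating window below is an assumption, not something stated here, so confirm it against Micron’s datasheet.

```python
# Rough write-endurance arithmetic for the Micron 5100 MAX 240GB SLOG device.
# DWPD = drive writes per day. The 5-year rating window is an assumption here;
# check Micron's datasheet for the exact warranty/endurance terms.

CAPACITY_GB = 240
DWPD = 5
RATED_YEARS = 5  # assumed rating period

# GB per day the drive is rated to absorb
daily_writes_gb = CAPACITY_GB * DWPD
# Total terabytes written (decimal TB) over the assumed rating period
total_tbw = daily_writes_gb * 365 * RATED_YEARS / 1000

print(f"Rated daily writes: {daily_writes_gb} GB/day")
print(f"Approx. total writes over {RATED_YEARS} years: {total_tbw:,.0f} TB")
```

Keep in mind that a SLOG only absorbs synchronous writes, so how much of that budget gets used depends on how much sync I/O the pool actually sees.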

Since the beginning of this build, I’ve added 3 more of the Seagate 4TB 2.5″ disks for a total of 36TB RAW (~32TB usable in the ZFS configuration I am using). Remember, this is my backup tier, so I am using the nine 4TB drives in raidz (understand the repercussions before doing the same), with the single Micron 5100 MAX 240GB SSD installed as SLOG in the ZFS pool. Ordinarily I’d mirror SLOG devices, but remember, this is supposed to be backup storage that could provide some capacity and IO to VMs if needed. If you want to create a super-high-performance solution, don’t use SMR disks, and do yourself a favor and mirror your SLOG. If you want the full 40TB RAW in this box, you obviously need ten 4TB disks. Putting a SLOG in front of that would mean using a PCIe NVMe SSD, and fortunately the R620 still has one slot remaining!
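Here is a minimal Python sketch of the capacity math behind the raw and usable figures above, assuming one raidz1 vdev and ignoring ZFS overhead. It also builds (but does not run) the general shape of a `zpool create` command for nine data disks plus a single log device; the pool name `backup` and the `/dev/disk/by-id/...` paths are hypothetical placeholders, not the configuration the follow-up article will cover.

```python
# Approximate usable capacity of a single RAID-Z vdev, plus the shape of a
# matching `zpool create` command. The pool name and device paths are
# hypothetical placeholders; nothing here touches real disks.

from typing import List, Optional

TB = 10**12  # drive vendors' decimal terabyte
TIB = 2**40  # binary tebibyte, closer to what many tools report


def raidz_usable_tb(disks: int, disk_tb: float, parity: int = 1) -> float:
    """Data capacity of one RAID-Z vdev: (disks - parity) * disk size.

    Ignores ZFS metadata, allocation padding and slop space, so the numbers
    reported by `zfs list` will come in somewhat lower.
    """
    if disks <= parity:
        raise ValueError("need more disks than parity devices")
    return (disks - parity) * disk_tb


def zpool_create_argv(pool: str, data_disks: List[str],
                      slog: Optional[str] = None, parity: int = 1) -> List[str]:
    """Build (but do not run) argv for one RAID-Z vdev plus an optional SLOG."""
    vdev_type = "raidz" if parity == 1 else f"raidz{parity}"
    argv = ["zpool", "create", pool, vdev_type, *data_disks]
    if slog:
        argv += ["log", slog]  # single, unmirrored log device as in the post
    return argv


for n in (9, 10):
    usable = raidz_usable_tb(n, 4)
    print(f"{n} x 4TB raidz1: {n * 4}TB raw, ~{usable:.0f}TB usable "
          f"(~{usable * TB / TIB:.1f} TiB)")

# Hypothetical by-id paths for the nine HDDs and the SSD log device.
hdds = [f"/dev/disk/by-id/hdd{i}" for i in range(1, 10)]
print(" ".join(zpool_create_argv("backup", hdds, "/dev/disk/by-id/ssd0")))
```

Using by-id paths rather than `/dev/sdX` names is a common ZFS on Linux habit, since `sdX` names can shuffle between boots.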

That’s where I am going to wrap up this article for now. It might seem as though I came into this positive and excited but left off kind of flat, what with the disdain for Seagate and my dislike for SMR disks. However, I am going to follow up with the ZFS configuration and actual performance of this thing in the next article. Of course, as a compute node, you guys should all know that, with the exception of the slower CPUs, this thing rocks. Disk performance is probably where most people are concerned, and rest assured I am going to follow up on that!

As always thanks for reading and stay tuned for the next part!

I am a Sr. Systems Engineer by profession and am interested in all aspects of technology. I am most interested in virtualization, storage, and enterprise hardware. I am also interested in leveraging public and private cloud technologies such as Amazon AWS, Microsoft Azure, and vRealize Automation/vCloud Director. When not working with technology I enjoy building high performance cars and dabbling with photography. Thanks for checking out my blog!


November 2, 2017

If I might ask, are you planning on running ESXi as the base virtualization system, then having a VM with the drives passed through to provide the backup storage? If that’s the case, is there any reason for going that route rather than running Debian/Ubuntu as a bare-metal system?

November 2, 2017

Hi Christoffer – I do run ESXi on this machine with a VM and HBA passed through. The only real reason is that I can’t totally justify running a dedicated Linux machine for storage alone. The R620 is such a potent machine and just begs to become a hypervisor – I had the RAM handy and the CPUs have decent core count so it makes the most sense to maximize my virtual capabilities in the lab while also gaining the storage.

November 4, 2017

Yes, I do agree that it would be a waste if you did not utilize all the power. What’s the power consumption of that machine with all the disks?

Will you also post parts 2–3 where you show some configuration aspects of it?

What program will you use for backup, VDP/Veeam/ZFS Replication?

I am looking into a backup solution myself, hence i am deeply interested in what you bring on the blog!

Regards

November 12, 2017

KVM? SmartOS? Don’t get me wrong, I’m a fan of ESXi, but there are other options out there. 🙂

November 13, 2017

Agree, Brian. I’ve tried KVM, XenServer, and Proxmox, and I didn’t find that any of the three had the features and usability of ESXi 🙂

Also, we use ESXi at my work, so it gives me the opportunity to tinker and understand it on another level than I did previously.

November 14, 2017

Agree!

November 14, 2017

At the end of the day the vSphere Ecosystem is, in my opinion, unparalleled. Can definitely get by with KVM/Xen/Proxmox if needed… but VMware has created such a vast platform. A lot of my clients use vCenter and ESXi and that’s the end of it. But the ability to combine vRA, vROPs, NSX, vCD, etc… there’s so much more to be had. That’s why I continue to roll with vSphere 🙂

October 24, 2017

Good work! I had a few questions.

With the replacement PCI-E HBA, do you have full support for drive LED indication and hot swap on the backplane? Why not get an entry-level PERC like the H310, which could support JBOD, and just install it in the mini PERC slot? It could be had for under $40 on eBay.

Looking forward to an update on how things go with this build 🙂

October 25, 2017

Hi Dhiru – I had the LSI 9211-8i from other projects available and to keep my R620 purchase as low as possible I did not include any storage controllers. The H310 by default is a hardware RAID controller so it’d need to be flashed with firmware to IT mode. Most of the H310 mini cards are $60 – $100 on eBay. Just a cost thing and what I had on hand. Oh, and yes, the LEDs and hot swap all work with the 9211-8i card.

October 22, 2017

I had to do something similar with my home lab I built a while back. I had purchased a Dell PowerEdge C6105 and picked up 6x cheap ConnectX-2 VPI cards (dual port 40G IB). It would have cost me more than I paid for the cards to get low-profile brackets for them. I just whipped out the hand brake and tin snips and cut/bent the brackets. It wasn’t pretty, but it worked.

October 22, 2017

I wonder if you can still consider this a home lab. I used to try to keep up with my own lab, but stopped after three R710s and one 12-bay R510. I still don’t have disks for the R510. That’s a great deal on the R620. Are you able to share which wholesaler you bought from?