I’ve been using VMware ESXi for about five or six years now, from my first deployment of ESXi 4.0, then a fresh install of ESXi 4.1, and on to 5.0, 5.1, 5.5, and now 6.0 GA. In that time I have followed along with the various VMFS changes and upgrades. Most notable was the VMFS-3 to VMFS-5 upgrade with its unified 1MB block size. With VMFS-5, VMDKs and (virtual-mode) RDMs could reach 2TB (less 512B) on that single block size in ESXi 5.0/5.1. In ESXi 5.5, however, the maximum VMDK/RDM size limit went up to 62TB. Awesome!

As one of our clients is going to be moving over an inordinate amount of data, I’ve been anxious to see where we go with huge VMDKs and RDMs. Up until this point in my experience with ESXi, I have never had a virtual machine with an attached VMDK or RDM larger than 2TB. In fact, I am not sure I’ve actually had any virtual machines with VMDKs much over 1TB. That’s not to say I haven’t had virtual machines with more than 2TB of data attached, because I have, but it’s been through numerous VMDKs or through iSCSI LUNs connected directly to a VM or physical server. In an enterprise situation where you are replicating at the SAN level, you may not want LUNs larger than 1 to 2TB if you can avoid it, simply because they take a while to replicate. You may also want to break up your LUNs and datastores very intentionally, as there may be some VMs in your environment that you do not wish to replicate. If they live in the same datastore as ones that you do want to replicate, well, they’re replicating.

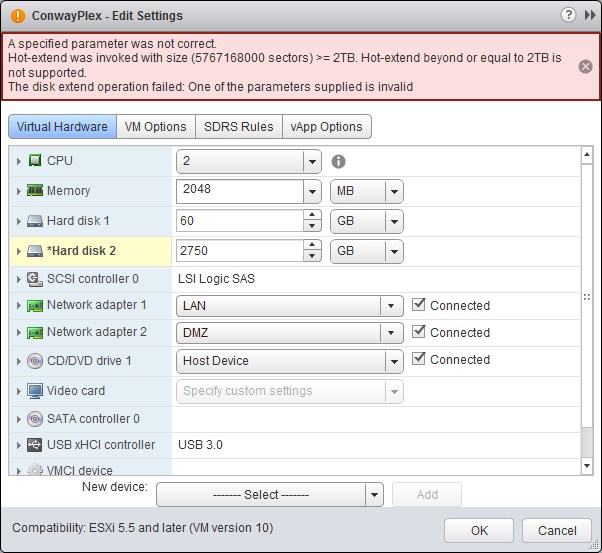

So, with all that said, last week was the first time I created a VMDK that was over 2.0TB. It happened to be on my home server. I have a Plex server that stores all my media, and as I moved more and more data from optical disc to my disk-based storage, it wasn’t long before I had reached that 2.0TB point. No big deal, right? Just a typical case of extending a disk: make sure there’s enough space on the datastore, edit the VM to increase the disk size, and then extend the volume in Windows. Except, this happened:

Wasn’t expecting that! Turns out VMware makes no mention of this in the KB “FAQ on VMware vSphere 5.x for VMFS-5”, nor in the KB “Block Size Limitations of a VMFS Datastore”. They do address the issue in the KB “Support for Virtual Machine Disks Larger than 2TB”, but it is buried in the middle of the text. It reads:

Virtual machines with large capacity disks have these conditions and limitations:

- [stuff and things]…

- You cannot hot-extend a virtual disk if the capacity after extending the disk is equal to or greater than 2 TB. Only offline extension of GPT-partitioned disks beyond 2 TB is possible.

- [more stuff, more things]…
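To make the quoted rule concrete, here’s a minimal Python sketch of a pre-check you could run before resizing a disk. It assumes the KB’s “2 TB” means 2 TiB (2 × 1024^4 bytes), which the excerpt doesn’t spell out. The function name and constants are made up for this example and are not part of any VMware tooling.

```python
# Hypothetical helper for illustration only; not a VMware API.
TIB = 1024 ** 4

# Assumption: the KB's "2 TB" threshold is 2 TiB.
HOT_EXTEND_LIMIT_BYTES = 2 * TIB


def requires_power_off(new_size_bytes: int) -> bool:
    """Return True if extending to new_size_bytes needs the VM powered off.

    Per the quoted rule, hot-extend is not possible when the capacity
    after the extend is equal to or greater than 2 TB.
    """
    return new_size_bytes >= HOT_EXTEND_LIMIT_BYTES


if __name__ == "__main__":
    for size in (1 * TIB, 2 * TIB - 512, 2 * TIB, 3 * TIB):
        verdict = "power off first" if requires_power_off(size) else "hot-extend OK"
        print(f"{size} bytes: {verdict}")
```

Under that reading, anything up to the old 2TB (less 512B) maximum can still be extended hot, and anything at or past 2TB needs downtime.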

Well, that’s something! In all of these years I’ve never had to create a VMDK over 2.0TB, and I would just tell people “Yeah, it’s no problem, just upgrade or migrate your data to a VMFS-5 datastore and you’ll be fine” when in reality that doesn’t come without some issues. The keyword above is “hot-extend”. One of the most common day-to-day operations in ESXi is bumping space in a VM while the VM is on. We do it constantly. We rely on it! So, once a VMDK or RDM needs to grow to 2.0TB or beyond, we’ll no longer be able to do this without downtime and will have to power down the VM to extend the disk. Bummer!

I haven’t tried it yet, but I did hear of a trick or workaround. I cannot find the reference right now, but I read a post by someone on the VMware Community boards stating that you can detach the virtual hard disk from the current VM, attach it to a powered-off VM, increase the size of the disk there, then plop it back down on the intended VM and extend the filesystem within the OS. Again, I haven’t tried it, and it is kind of janky considering you need to have a powered-off VM lying around, but it’s something. The only thing is, you’re not going to want your first-tier operations guys dropping hard disks from VMs and re-attaching them and such. But I suppose this is a case of taking what you can get. This may be (and probably is) old news to a lot of you, so if that’s the case I apologize! It’s just been my practice to not have VMDKs so large.
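For anyone who wants to write this down as a runbook, here’s a rough Python sketch that just lays out the order of operations described above. It doesn’t talk to vCenter or ESXi at all. The function name, the `helper-vm` name, and the 2 TiB threshold are assumptions for illustration, and the workaround itself is still untested on my end.

```python
from typing import List

TIB = 1024 ** 4

# Assumption: the KB's "2 TB" threshold is 2 TiB.
HOT_EXTEND_LIMIT_BYTES = 2 * TIB


def plan_disk_extend(new_size_bytes: int, helper_vm: str = "helper-vm") -> List[str]:
    """Return the ordered steps for growing a disk to new_size_bytes.

    Below the limit, a normal hot-extend is enough. At or above it, fall
    back to the (untested) helper-VM workaround from the community post.
    """
    if new_size_bytes < HOT_EXTEND_LIMIT_BYTES:
        return [
            "increase the disk size on the running VM (hot-extend)",
            "extend the filesystem inside the guest OS",
        ]
    return [
        "detach the virtual disk from the running VM (keep the VMDK, do not delete it)",
        f"attach the disk to the powered-off VM '{helper_vm}'",
        f"increase the disk to {new_size_bytes} bytes on '{helper_vm}'",
        f"detach the disk from '{helper_vm}' and re-attach it to the original VM",
        "extend the filesystem inside the guest OS",
    ]


if __name__ == "__main__":
    for number, step in enumerate(plan_disk_extend(3 * TIB), start=1):
        print(f"{number}. {step}")
```

I’d expect this to be practical only for data disks, since the original VM has to keep running while the disk is away.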

Feel free to comment with suggestions on workarounds or solutions!

I am a Sr. Systems Engineer by profession and am interested in all aspects of technology. I am most interested in virtualization, storage, and enterprise hardware. I am also interested in leveraging public and private cloud technologies such as Amazon AWS, Microsoft Azure, and vRealize Automation/vCloud Director. When not working with technology I enjoy building high performance cars and dabbling with photography. Thanks for checking out my blog!


August 25, 2017
